Your AI Vendor Does Not Have You Covered. We Read the Fine Print.

Your legal team signed the enterprise agreement.

The vendor's logo is on your homepage.

The board received a briefing.

You are covered.

The contract says otherwise.

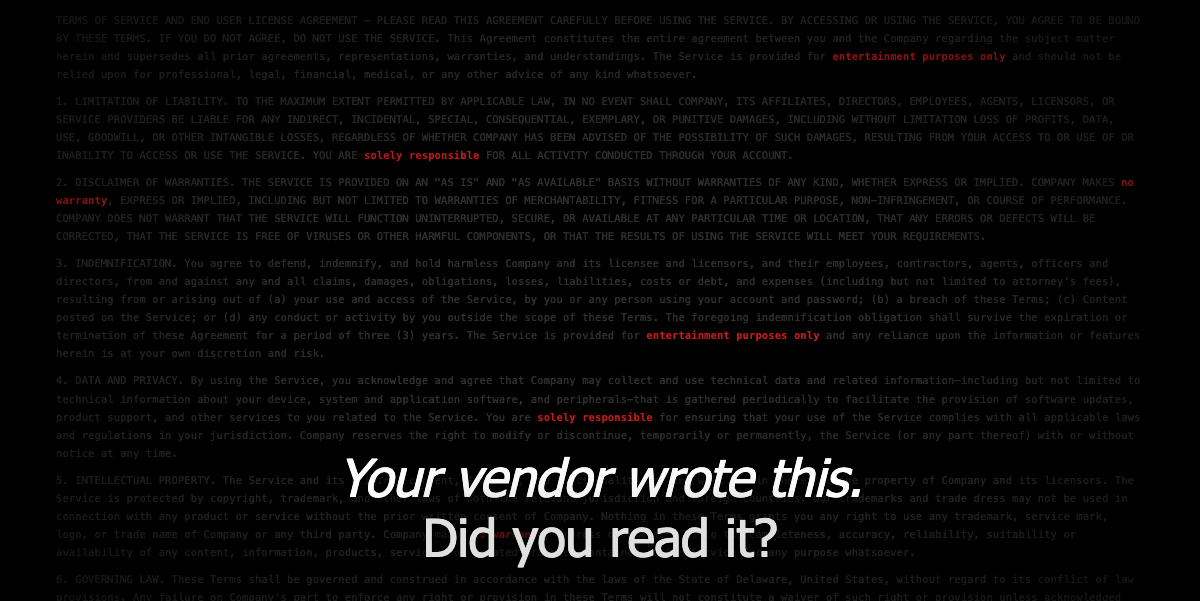

In April 2026, TechCrunch, Tom's Hardware, and The Next Web all reported the same disclosure: Microsoft's Copilot Terms of Use, updated October 2025, classify the product as 'for entertainment purposes only.' This is the same product Microsoft charges up to $30 per user per month for. The same product embedded into Windows, Word, Excel, and Teams. The marketing says one thing. The legal terms say another. Your organization operates under the legal terms.

What Do AI Vendor Contracts Actually Say About Liability?

Microsoft Copilot Terms of Use, available at microsoft.com/en-us/microsoft-copilot/for-individuals/termsofuse, include this language under a section titled IMPORTANT DISCLOSURES AND WARNINGS in bold capitals:

"Copilot is for entertainment purposes only. It can make mistakes, and it may not work as intended. Do not rely on Copilot for important advice. Use Copilot at your own risk." -- Microsoft Copilot Terms of Use, October 2025

The same Terms state: 'When you request that Copilot take Actions on your behalf, you are solely responsible for those Actions and any results or consequences.' Microsoft also makes no warranty that Copilot outputs are free from copyright, trademark, or privacy rights infringement.

Atlassian's AI Terms, published at atlassian.com/legal/ai-terms, state that Atlassian 'makes no warranty as to the accuracy, completeness or reliability of Output or that it does not violate third-party rights or Law.' The customer is 'responsible for its use of Output, including determining whether Output is appropriate for that use.' Liability for all AI output claims is capped at the lower of 12 months of product fees paid or $1,000,000, regardless of the actual damage caused.

That $1 million ceiling is not protection for your organization. It is a limit on what Atlassian can ever owe you, and for most customers the fee-based figure will be the lower one.
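To make the 'lower of' cap concrete, here is a minimal arithmetic sketch. The function names and the dollar figures in the example are hypothetical illustrations, not terms from any contract; only the 'lower of 12 months of fees or $1,000,000' rule comes from the terms quoted above.

```python
def recoverable_amount(damages, fees_last_12_months, fixed_cap=1_000_000):
    """Most a customer could recover under a 'lower of fees or fixed cap' clause."""
    cap = min(fees_last_12_months, fixed_cap)  # the cap is the LOWER of the two
    return min(damages, cap)                   # recovery can never exceed the cap


def uncovered_exposure(damages, fees_last_12_months, fixed_cap=1_000_000):
    """Portion of the loss that stays with the deploying organization."""
    return damages - recoverable_amount(damages, fees_last_12_months, fixed_cap)


# Hypothetical example: $5M in damages, $120k paid in fees over 12 months.
damages = 5_000_000
fees = 120_000
print(recoverable_amount(damages, fees))   # 120000
print(uncovered_exposure(damages, fees))   # 4880000
```

In this hypothetical, the fee-based figure is the operative cap, so the $1 million number never comes into play.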

Sources: Microsoft Copilot Terms of Use (October 2025), microsoft.com/en-us/microsoft-copilot/for-individuals/termsofuse; Atlassian AI Terms, atlassian.com/legal/ai-terms; TechCrunch, April 5 2026; Tom's Hardware, April 2026.

"97% of organizations that experienced AI-related data breaches had no proper AI access controls in place." -- IBM Cost of a Data Breach Report, 2025

What Are the Three Liability Gaps These Terms Create?

Gap one: Output Liability. Your vendor provides the model. You provide the use case, the data, and the deployment decision. Both Microsoft and Atlassian make this explicit in their published terms. When AI output causes harm, the deployment decision and its consequences belong to the organization that made it.

Gap two: Regulatory Compliance. The EU AI Act, which entered into force August 2024, classifies AI systems by risk level and assigns compliance obligations not only to providers but also to 'deployers,' the organizations putting AI into operational use. Under Article 99, fines reach up to 35 million euros or 7% of global annual turnover for prohibited practices, and up to 15 million euros or 3% for breaches of most other obligations, including those placed on deployers of high-risk systems. Your AI vendor is not the deployer under the Act's definition. You are.
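The '35 million euros or 7%' formula is easier to size with numbers in hand. The sketch below assumes the 'whichever is higher' reading that Article 99 applies to most undertakings; the function name and the turnover figures are hypothetical, and the reduced rule for SMEs is not modeled.

```python
def fine_ceiling_eur(global_turnover_eur, fixed_eur=35_000_000, percent=7):
    """Upper bound of a fine: the higher of a fixed amount or a share of turnover.

    Assumption: 'whichever is higher' applies (the SME rule is not modeled).
    Integer math is used to avoid floating-point rounding on large sums.
    """
    turnover_based = global_turnover_eur * percent // 100
    return max(fixed_eur, turnover_based)


# Hypothetical turnovers, for illustration only.
print(fine_ceiling_eur(100_000_000))     # 35000000  (fixed amount is higher)
print(fine_ceiling_eur(2_000_000_000))   # 140000000 (7% of turnover is higher)
```

For any organization with turnover above 500 million euros, the percentage figure is the one that sets the ceiling.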

Gap three: Incident Response Ownership. In Mobley v. Workday, Inc., the US District Court for the Northern District of California held in July 2024 that an AI vendor can face direct liability as an 'agent' of the employers using its tools under federal anti-discrimination law, and in May 2025 it conditionally certified the case as a collective action. The agency theory cuts both ways: if vendors can be agents, deploying organizations are the principals, responsible for what their deployed AI systems do. When the public failure arrives, your vendor will not draft your regulatory filings or testify at your hearing.

Sources: EU AI Act (Regulation 2024/1689, Article 99); Mobley v. Workday, Inc., N.D. Cal., collective action conditionally certified May 2025, case no. 23-cv-00770-RFL.

Does Having an AI Policy Cover Your Organization?

No. A policy is a statement of intent; it’s not a governance system.

The 2025 AI Governance Survey, conducted by Pacific AI across 351 participating organizations, found that 75% have formal AI usage policies. Only 54% maintain incident response playbooks. Only 59% have dedicated governance roles. A policy without a tested incident protocol is a document waiting to fail.

Regulators and plaintiffs don’t ask whether you had a policy. They ask what controls you had in place, who owned the decision, what monitoring existed, and what your incident response looked like. Each of those questions requires evidence, and evidence requires a governance system, not a statement.

What Should You Do Before Your Next Vendor Renewal?

First: Read the liability sections of your current AI vendor agreements. The old advice to read the terms BEFORE you download the app applies to your AI vendor agreements as well. Search for 'limitation of liability,' 'warranty disclaimer,' and 'acceptable use policy.' Then map exactly where vendor responsibility ends and your organization's liability begins. The language is there. Most procurement teams have never located it, let alone mapped it to identify exposure. At the end of the day, the shared responsibility model of cloud doesn’t s
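The search in the first step can be roughed out in a few lines. This is a minimal sketch that assumes the agreement has already been extracted to plain text; the function name `find_clauses` and the sample contract text are hypothetical, and a literal phrase search is only a starting point for a human review.

```python
import re

# The three phrases named in the step above.
TERMS = ["limitation of liability", "warranty disclaimer", "acceptable use policy"]


def find_clauses(text, terms=TERMS, context=60):
    """Return (term, offset, snippet) for each case-insensitive match, in document order."""
    hits = []
    for term in terms:
        for match in re.finditer(re.escape(term), text, flags=re.IGNORECASE):
            start = max(0, match.start() - context)
            end = min(len(text), match.end() + context)
            snippet = text[start:end].replace("\n", " ")
            hits.append((term, match.start(), snippet))
    return sorted(hits, key=lambda hit: hit[1])


# Hypothetical contract excerpt, invented for illustration.
sample = (
    "12. LIMITATION OF LIABILITY. Vendor's total liability is capped.\n"
    "13. Acceptable Use Policy. Customer will comply with the policy.\n"
)
for term, offset, snippet in find_clauses(sample):
    print(f"{offset:>5}  {term}: {snippet}")
```

Real agreements often phrase these headings differently (for example 'Disclaimer of Warranties'), so the term list would likely need variants added for each vendor.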

Second: Classify every AI deployment by risk, not just by features. The EU AI Act defines high-risk AI as systems used in employment decisions, credit scoring, healthcare, and educational assessment. The EEOC has stated that employers cannot outsource anti-discrimination obligations to AI vendors, a principle Mobley v. Workday is establishing in federal case law. Know which of your deployments are high-risk before a regulator does.
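A deployment inventory with a risk flag can start very small. The sketch below uses only the four use-case categories named above; the Act's actual high-risk list is longer, and the `Deployment` record, the labels, and the example entries are hypothetical.

```python
from dataclasses import dataclass

# Only the four categories named in the text; the Act's full list is longer.
HIGH_RISK_USES = {
    "employment decisions",
    "credit scoring",
    "healthcare",
    "educational assessment",
}


@dataclass
class Deployment:
    name: str
    vendor: str
    use_case: str


def classify(deployment):
    """Flag a deployment as high-risk, or mark it for manual review otherwise."""
    if deployment.use_case in HIGH_RISK_USES:
        return "high-risk"
    return "needs review"  # deliberately not 'low-risk': absence from the set proves nothing


# Hypothetical inventory entries.
inventory = [
    Deployment("resume screener", "ExampleVendor A", "employment decisions"),
    Deployment("meeting summarizer", "ExampleVendor B", "internal productivity"),
]
for item in inventory:
    print(f"{item.name}: {classify(item)}")
```

The default label is 'needs review' by design, so that a use case missing from the list prompts a human decision instead of a false all-clear.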

Third: Build the governance infrastructure that fills the gap. A certified audit trail, continuous model monitoring, and ISO 42001 certification create the independently verified record of reasonable care that no vendor contract provides. When the question arrives, and it will, the answer needs to already exist in your governance documentation.

The Bottom Line

Microsoft classified its product as entertainment. Atlassian capped its AI liability at no more than 1 million dollars. Both companies are selling tools they have explicitly declined to stand behind in their published legal terms.

This is not a criticism of either company. It is a structure you must understand before your next AI deployment decision.

Fusion Collective has audited hundreds of AI systems, and the gap between what your vendor covers and what you owe regulators is not an edge case. It is the standard position for organizations that have not yet built a governance program.

The fact of the matter is this: your vendor built the model, but YOU made the business decision to deploy it. You need to own that decision, with the documentation it requires.

Start building your governance program at www.fusioncollective.net
