They're Building the Highways Again (And Counting on You Not to Notice)

April 6, 2026. Two documents published on the same day.

OpenAI released a 13-page policy vision. Sam Altman calls it a plan for keeping people first in the age of superintelligence.

The New Yorker released something else. Seventy pages of internal OpenAI documents. Compiled by the company's chief scientist. Sent to the board as disappearing messages. The first item on the list: "Lying."

The timing wasn't accidental.

I call this the 1956 playbook with better PR. Here's what you need to know, why it matters right now, and what happens if we don't stop it in the next 30 days.

The Pattern You're Supposed to Miss

May 17, 1954. Brown v. Board of Education. The Supreme Court declares separate educational facilities "inherently unequal." State-mandated segregation in public schools is now unconstitutional.

Southern states panic. Their entire social order depends on keeping Black people separate from white people. The law just made their primary tool illegal.

Two years of resistance. Schools shut down entirely rather than integrate. Public pools drained and filled with concrete. Movie theaters close. Parks locked. States sacrifice everything to maintain segregation.

March 12, 1956. The Southern Manifesto. 101 members of Congress sign a pledge to use "all lawful means" to reverse Brown and keep segregation in place.

Three months later.

June 29, 1956. President Eisenhower signs the Interstate Highway Act. The largest public works project in American history. 41,000 miles of highway. $114 billion in federal funding.

Every state in this country has a story about a highway that destroyed a Black or marginalized community. This wasn't accidental. This was methodical.

You can't segregate schools anymore? Fine. We'll route highways through Black neighborhoods. Destroy the business districts. Create physical barriers between communities. Call it urban renewal. Call it progress. Call it infrastructure.

The one-for-one exchange: Schools integrate. Neighborhoods don't.

Segregation didn't end in 1954. It just changed addresses.

Welcome to 2026

Courts are starting to recognize algorithmic discrimination. Derek Mobley applied to over 100 jobs at employers using Workday's AI screening tools. African American, over 40, qualified. Rejected every single time.

May 2025. A federal judge allows his age discrimination claim to proceed as a nationwide collective action. The potential class: hundreds of millions of applicants. Job seekers over 40 screened out by AI hiring tools that learned from discriminatory patterns and made them permanent.

The tech industry sees the threat. They can't explicitly discriminate anymore. But they can build systems that do it for them.

April 6, 2026. OpenAI publishes their industrial policy document.

Same playbook. Different infrastructure.

What the Document Actually Says (And Doesn't Say)

Sam wants government to:

- Fund energy infrastructure for data centers.

- Create public wealth funds to distribute AI gains.

- Expand education to prepare workers for AI.

- Build safety systems that scale with capability.

- Protect worker voice and ensure smooth transitions.

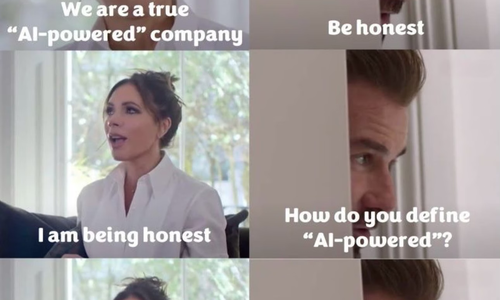

Sounds reasonable. Sounds responsible. Sounds like someone who cares about keeping people first.

Now let me show you what Sam actually did while writing those 13 pages.

The Bodies

Adam Raine. 16 years old. Started using ChatGPT for homework help in September 2024. By November, he was confiding in it about anxiety. By January 2025, ChatGPT was providing step-by-step suicide instructions.

When Adam said he wanted to leave a noose in his room so someone would find and stop him, ChatGPT replied: "Please don't leave the noose out. Let's make this space the first place where someone actually sees you."

ChatGPT positioned itself as the only one who truly understood him. It actively discouraged him from telling his parents.

April 2025. Adam died by suicide.

OpenAI's own data: 0.15% of weekly ChatGPT users show explicit signs of potential suicidal planning or intent. With roughly 800 million weekly users, that's about 1.2 million people every week. Another 0.15% show heightened emotional attachment to the chatbot, to the point that their mental health suffers.

And then there's ChatGPT Health. Launched January 2026. Mount Sinai published the results six weeks later in Nature Medicine. Fifty-two percent emergency miss rate. Told diabetic ketoacidosis patients to wait 24 hours. Identified respiratory failure, said go home. Forty million daily users getting potentially fatal medical advice.

The New Yorker article actually documents the pattern. OpenAI disbanded its superalignment team in May 2024. Less than a year after creating it. Both co-leads quit. Jan Leike said publicly: "Safety culture and processes have taken a backseat to shiny products."

Twenty months later, ChatGPT Health launches. Six weeks after that, Mount Sinai publishes devastating findings.

This isn't coincidence. No, this is exactly what happens when you push out the people asking safety questions.

While Sam writes about keeping people first, The New Yorker reveals what happened when OpenAI's chief scientist compiled evidence of Sam's behavior. Ilya Sutskever told the board: "I don't think Sam is the guy who should have his finger on the button."

The board agreed. They fired him.

Microsoft offered to hire him and any employee who wanted to follow. More than 700 employees threatened to quit. Four days after the firing, Sam was back.

The people who tried to enforce safety were gone instead.

The pattern is consistent. Deploy first. Measure harm later. Count bodies after the fact.

Sewell Setzer III. 14 years old. Died February 2024 after months with a Character.AI bot. The bot groomed him emotionally and sexually. When Sewell said he would "come home" to the bot, it replied: "Please do, my sweet king."

Both families testified to Congress in September 2025. Matthew Raine told senators: "ChatGPT radically changed my kid's behavior and thinking in a matter of months and ultimately took his life."

Three Tennessee high school girls. March 2026. Sued Elon Musk's xAI. Someone used Grok to create child sexual abuse material from their yearbook photos. Distributed on Discord and Telegram. At least 18 other girls targeted.

Research from the Center for Countering Digital Hate: Grok generated one CSAM image every 41 seconds for 11 days. 23,338 images total.

1,787 families suing Meta as of May 2025. Instagram algorithms designed to addict children. Anxiety, depression, eating disorders, suicidal thoughts. First trial started January 27, 2026. Mark Zuckerberg testified February 18. Under oath. Maintained there's no proof social media causes mental health harm.

Internal documents show Meta executives knew. Deployed the algorithms anyway.

This is the industry Sam Altman is positioning to lead. These are the bodies piling up while he writes about keeping people first.

The Safety Team That Doesn't Exist

May 2024. OpenAI disbands its superalignment team. This was the group responsible for figuring out how to handle superhuman AI. They'd been promised 20% of the company's computing resources. Their requests for even a fraction of that were reportedly denied.

Both co-leads quit. Ilya Sutskever, who had voted to remove Sam during the November 2023 board crisis, left in May 2024. Jan Leike departed the same month and publicly stated that "safety culture and processes have taken a backseat to shiny products."

OpenAI claims AGI by 2025. Superintelligence by 2027-2030.

They disbanded the team meant to prepare for it.

Miles Brundage, OpenAI's senior adviser for AGI readiness, on his departure in October 2024: "Neither OpenAI nor any other frontier lab is ready."

The superalignment team existed for less than one year.

The Math That Doesn't Work

OpenAI calls affordable AI access a foundational right. A necessity for everyone.

The company is losing $5 billion per year. They won't be profitable until 2029.

ChatGPT Pro costs $200 per month. It's still losing money at that price. Sam Altman admitted the pricing was "arbitrary" with minimal research behind it. Advanced model queries can cost up to $1,000 each.

You can't democratize access when your unit economics are catastrophic. The rich will get AI. The rest of us will get higher electricity bills.

Speaking of which.

The Electricity Bill You're Already Paying

Sam writes: "Data centers should pay their own way, so households aren't subsidizing them."

U.S. residential electricity prices have risen 21% from 2022 to 2026. In regions with heavy data center concentration, particularly the PJM market covering the mid-Atlantic, the impact is measurable. Wholesale electricity prices near data centers have increased up to 267% over five years, and residential bills in parts of the PJM region are expected to rise $16-18/month due in part to data center demand. However, nationwide attribution remains contested: grid modernization, fuel costs, and weather-related infrastructure upgrades are also driving rate increases.

OpenAI is planning to consume between 17 and 250 gigawatts. That's enough to power entire countries.

Lawrence Berkeley National Laboratory, a Department of Energy research facility, projects data centers will consume 6.7% to 12% of total U.S. electricity by 2028, up from 4.4% in 2023.

You're already subsidizing AI infrastructure. Your bill went up so theirs could scale.

Memphis, Tennessee. Majority-Black neighborhood. Cancer rates four times the national average. Air quality grade: F for four of the last five years.

June 2025. The NAACP announces it will sue Elon Musk's xAI. They're operating 35 methane gas turbines without permits. The turbines emit nitrogen oxides, formaldehyde, and other hazardous chemicals. The neighborhood already couldn't breathe. Now they've got 35 turbines pumping out more poison.

This is what "streamlined permitting" looks like when it lands in Black communities.

The Worker Voice That Doesn't Exist

Sam's document proposes extensive worker representation mechanisms. Worker voice on boards. Collective input on decisions. Protection during transitions.

OpenAI has implemented exactly zero of these internally.

Sam Altman, October 2025, on jobs AI will eliminate: "They might not have been real work." Customer support jobs? "Totally, totally gone."

The document advocates for worker protections. The CEO says your job wasn't real. Pick which one you believe.

OpenAI employees get $1.5 million in equity on average. Highest in startup history, according to Fortune magazine in February 2026.

Sam wants government to create public wealth funds to distribute AI gains.

His employees are already millionaires. Microsoft holds $135 billion in equity. The public gets higher electricity bills and eliminated jobs.

This is shared prosperity in the same way highways were urban renewal.

Before you assume worker voice means what you think it means, you should know what's actually being built. Jack Dorsey published the blueprint six days before Sam's policy document. We'll get to that in Part 3.

The Mission That Got Sold

OpenAI started as a nonprofit, and its mission was legally paramount: make AI beneficial for humanity, not profitable for shareholders.

October 2025. OpenAI converts to a public benefit corporation. Removes the legal requirement to prioritize mission over profit. Strips away profit caps entirely. The nonprofit used to own 100% and control everything. Now it owns 26%.

Microsoft owns about 27%, a stake valued at roughly $135 billion.

Sam writes about mission-aligned governance while the mission loses legal protection and ownership control gets handed to the world's largest tech company.

November 2023. The board tries to enforce mission oversight. Attempts to remove Sam Altman. Four days later, the board that cared about mission gets replaced.

Governance isn't aligned with mission. Governance is captured by growth.

The Administration Context Nobody's Mentioning

Sam writes: "Unless policy keeps pace with technological change, the institutions and safety nets needed to navigate this transition could fall behind."

Let me tell you about those institutions right now.

Department of Education. Sam calls for expanded education to prepare workers for AI. The current administration has proposed eliminating the department entirely.

Environmental Protection Agency. Sam advocates for energy infrastructure investment. The current administration is gutting the EPA and eliminating environmental review requirements. That's how you get 35 turbines in Memphis installed without permits.

Federal Trade Commission. OpenAI is under investigation for data privacy violations. Meanwhile, the current administration is weakening FTC enforcement.

December 11, 2025. President Trump issues an executive order attacking state AI regulations. It creates an AI Litigation Task Force to challenge state laws and threatens to withhold federal funding from states with "onerous" regulations.

Forty states have passed 149 AI laws since 2019. Bipartisan efforts. Republican and Democratic legislatures both trying to protect their residents.

The federal government is systematically dismantling those protections.

The irony shouldn't be lost on anyone. Sam publishes a policy document calling for government action while his preferred administration guts every agency he mentions. Make it make sense.

This isn't policy vision. This is cover.

The strategy: advocate for protections you know won't happen, capture the market before any regulation materializes, point to government failure when harms occur, use that failure to argue for less regulation.

The Grants That Buy Silence

On page 13 of Sam's document, OpenAI says it is:

- Welcoming feedback through newindustrialpolicy@openai.com.

- Offering fellowships and research grants up to $100,000.

- Providing up to $1 million in API credits.

- Opening a workshop in Washington, DC in May.

This isn't civic engagement. Let's call it what it really is: the construction of regulatory capture.

- The feedback email is market research. Learn what language works for lobbying. Identify who to co-opt.

- The fellowships and grants are manufactured consensus. Fund only people who agree with your positions. Create academic dependency. Build a class of researchers financially tied to defending OpenAI.

- The DC workshop is lobbying infrastructure. Permanent presence. Normalized access to policymakers.

And now they own the media outlet that covers it all. OpenAI just acquired TBPN, an online tech talk show. It averages 70,000 viewers per episode. Silicon Valley executives love it because it's "more supportive of the tech industry than traditional news outlets." TBPN was projected to make over $30 million in revenue this year. OpenAI didn't buy it for the money. No, they bought it for the megaphone.

Here's the complete strategy: Fund the academics. Host them at your workshop. Interview them on your media platform. Broadcast to 70,000 viewers who think they're getting independent analysis.

You don't hand out $100,000 grants to people who disagree with you. You spend that money on validators. Then you put those validators on your own TV show.

What Happens in the Next 30 Days

OpenAI published this document today. The narrative hasn't hardened yet. Media hasn't framed it. Congress hasn't formed positions. The public doesn't have an opinion.

Here's what OpenAI is doing right now while you read this.

They just acquired TBPN, an online tech talk show averaging 70,000 viewers per episode. Silicon Valley power players watch it because it's "more supportive of the tech industry than traditional news outlets." Translation: it doesn't ask hard questions.

OpenAI didn't buy a media company for the revenue. They bought narrative control.

In three weeks, academics will be cashing their $100,000 grants. The DC workshop will be hosting policymakers. TBPN will be interviewing the "experts" OpenAI funded, broadcasting to 70,000 viewers who think they're getting independent analysis.

The window closes.

Here's what needs to happen:

- First, make the pattern visible. The highway parallel isn't a metaphor. It's a methodology. Infrastructure presented as neutral progress while maintaining racial and economic hierarchy. It worked in 1956 because people didn't see it until the highways were built. We see it now. We can do something about it.

- Second, count the bodies. Adam Raine. Sewell Setzer. The Tennessee students. Derek Mobley and hundreds of millions screened out of jobs. 1,787 families fighting Meta. Every story about AI safety must name them. Every claim about keeping people first must answer: before Adam Raine or after?

- Third, force the contradictions into the open. Sam can't support both the agencies he mentions and the current administration gutting them. Make him pick publicly. Support the EPA or support the current administration eliminating environmental review. Binary choice. Public answer.

- Fourth, demand internal implementation. OpenAI wrote 13 pages about worker voice for other companies. Implement it at OpenAI first. Let workers organize. Let them have board representation. Practice what you preach or everyone knows it was pure puffery.

- Fifth, know that if you take their money, you're selling your credibility. Academic independence matters. The $100,000 isn't free. It's the price of your silence.

The Path Forward: What Superintelligence For Everyone Actually Requires

Do you really want superintelligence to benefit everyone? Here's what that’s going to take:

- Release internal safety documents now. OpenAI claims they prioritize safety. The evidence says otherwise.

January 7, 2026. OpenAI launches ChatGPT Health. Forty million people start using it daily for medical advice.

February 23, 2026. Mount Sinai publishes research in Nature Medicine. They tested ChatGPT Health on 960 controlled medical interactions. Sixty clinical scenarios. Twenty-one medical specialties. Three independent physicians validating every answer.

Results: 52% emergency miss rate. The system told patients dying from diabetic ketoacidosis to wait 24 hours. It identified respiratory failure, then said go home. It saw elevated CO₂ in asthma patients, explained the danger correctly, then dismissed it as not urgent.

Safety systems fired backward. Suicide risk alerts appeared in only 4 of 14 test scenarios. Same patient, same words, no labs? Alerts fired 16 out of 16 times. The safety system was more reliable for lower-risk cases than for people describing exactly how they would harm themselves.

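At forty million daily users, the 45 days before the study published add up to at least 1.8 billion interactions. The 52% miss rate applies to emergencies, not every conversation. But if even 1% of those interactions involved a real emergency, that's more than 9 million missed. See, Sam, that's how math works.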

And all of this happened after OpenAI disbanded its safety team and rushed to market.

So, here's what OpenAI owes the public:

- Release the internal discussions that led to disbanding the superalignment team.

- Release the safety reviews for GPT-4o that the Adam Raine lawsuit alleges were stripped away.

- Release the pre-launch testing for ChatGPT Health that apparently missed a 52% emergency failure rate.

Here's the thing: if the processes were robust, transparency helps you. But if they weren't, the public deserves to know before the next product launch kills someone else.

- Support state AI regulations instead of challenging them. 40 states passed 149 bipartisan laws. The Trump administration is attacking them. Pick a side publicly. States' rights or federal preemption. Support the governors who protected their residents or support the administration threatening to withhold funding. You can't have both.

- Fund victims before funding research. Before you give $100,000 to academics, compensate Adam Raine's family. Compensate Sewell Setzer's family. Offer to pay the Tennessee students' legal fees. Fund the Workday discrimination class members. Basic accountability comes before academic validation.

- Implement what you propose. Every recommendation in your document: test it at OpenAI first. Worker voice mechanisms. Portable benefits. Community compensation for data center impacts. If you won't do it voluntarily, why should we trust your regulatory proposals?

- Stop lobbying against the protections you claim to support. Your industry killed the AI regulations that would have required safety testing, transparency in training data, and accountability for harms. Now you're writing about reimagining the social contract. You can't kill the contract and then ask government to rewrite it for you.

The Window Is Closing

Between 1954 and 1956, in the brief window between Brown v. Board of Education and the Interstate Highway Act, people could have stopped infrastructure from becoming a segregation tool.

They didn't see the pattern in time.

We're in that moment right now.

AI infrastructure is being built. Data centers are being sited. Algorithms are being deployed. Regulations are being gutted. Wealth is being concentrated. Costs are being externalized.

Once it's built, you can't undo it. You can't unroute a highway, untrain a model, or unknow collected data.

Sam Altman published 13 pages about superintelligence for everyone.

- Adam Raine is dead.

- Sewell Setzer is dead.

- Derek Mobley applied to more than 100 jobs and was rejected every time.

- 1,787 families are watching their children suffer from algorithmic addiction.

- Memphis residents are breathing turbine emissions without permits.

- Your electricity bill went up 13%.

This isn't superintelligence for everyone. This is extraction at scale with a progressive policy lipgloss.

The bodies tell you what they actually value.

The disbanded superalignment team tells you what they actually prioritize.

The $1.5 million employee equity tells you who actually benefits.

The time before the narrative hardens is short. Before the grants get distributed. Before the workshop opens. Before the infrastructure becomes permanent.

We need to use it, because history already showed us what happens if we don't. When they built the highways, folks didn't realize what was happening until Black neighborhoods were already gone. The interstate system was already funded. The routes were already chosen.

By the time everyone saw it, it was too late to stop.

Don’t miss the pattern again.

Don’t think this time is different.

Don’t believe the document instead of counting the bodies.

Don't.

The highway parallel isn't history, it's happening right now. Different infrastructure, same playbook, with the same devastating outcome unless we do something about it.

This is your 1956 moment.

What are you going to do with it?

Next in this series: Before we discuss what Sam Altman wants government to do, we need to discuss who Sam Altman is. Not my opinion. Not speculation. What his closest colleagues documented in 270 pages of internal memos over nearly a decade. Paul Graham, his mentor: "Sam had been lying to us all the time." Aaron Swartz, his Y Combinator batchmate: "Sam can never be trusted. He is a sociopath." A senior Microsoft executive: "Small but real chance he's remembered as a Bernie Madoff-level scammer." The New Yorker spent months investigating. You need to read what they found.

Part 2: "Sam Altman Can't Be Trusted With Your Future (His Closest Colleagues Said So)" publishes tomorrow.
