Six Minutes to AI Readiness? Sure, And I'm the Tooth Fairy

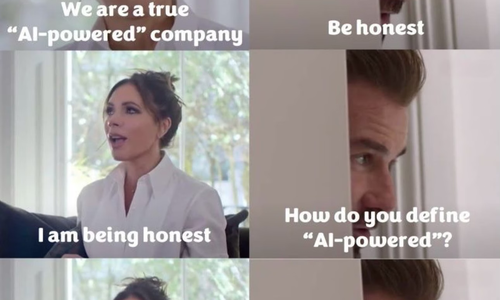

There are two kinds of people in the tech world. Those who believe you can gauge AI readiness in six minutes, and those who understand the world in which they actually live and operate.

Apolitical launched their "AI Readiness Check" (ARC) for public servants. Twenty yes-or-no questions. Six minutes of your time. Individualized feedback. And voilà! You'll know exactly where to "prioritize your leadership" in AI adoption.

The United States Conference of Mayors, partnering with Google, just published their "Mayors AI Playbook": practical guidance, real-world examples, tips on measuring success. Select your first AI project! Deploy AI strategies! Govern your data!

This would be funny if communities across America weren't drowning in the consequences of exactly this kind of feel-good theater.

This is for the people all the way in back – THESE ARE NOT EDUCATIONAL RESOURCES. They're permission slips for fiscal malpractice, wrapped in the veneer of innovation and distributed without a single question about whether anyone receiving them has achieved the most basic digital competence.

The Quiz That Thinks It's a Strategy

Let me save you six minutes. The ARC asks public servants to evaluate themselves across four dimensions: ethical AI use, AI innovation, operational applications, and workforce readiness. You tick some boxes, get your score, and presumably feel great about your organization's "AI maturity."

But Apolitical isn't alone in this delusion. The United States Conference of Mayors partnered with Google to create a "Mayors AI Playbook" that gleefully touts all the amazing outcomes you can derive from AI and will help you select your first AI project. Real-world examples from peers. Practical guidance on AI and data governance. Tips on measuring success. Not one single solitary inkling of a concept of an idea that maybe, just maybe, you're not ready.

Let me give you an example. The City of Hackensack, New Jersey. County seat of Bergen County, the most population-dense suburban county in the entire country. Nearly one million people in 234 square miles. The kind of place that should have digital infrastructure figured out.

Building department: zero services online.

Zoning department: zero services online.

Tax assessor: zero services online.

Health department: zero services online.

Police department: zero services online.

City Council: zero services online.

They have a "portal" for the county court, but good luck figuring out what it actually does.

But sure, let's assess your AI readiness. Let's help you select your first AI project. Let's have you evaluate whether your organization has "developed role-specific guidance for how individuals can use AI." Let's discuss your AI innovation roadmap and your agentic AI governance framework.

You can't put a building permit application online, but we're going to help you assess whether you're ready for machine learning models and algorithmic decision-making. This is the equivalent of handing someone who's never driven a car the keys to a fighter jet and a checklist asking "Do you understand advanced aeronautical navigation systems?"

When these gaps are pointed out, the response from the purveyors of the slop will be predictable: "Many jurisdictions are much more advanced."

Who cares? They didn't limit the dissemination of these documents. They're handing AI playbooks and readiness assessments to every municipality in America, regardless of whether they've achieved the most basic digital transformation. They're telling cities that can't even process a permit application online that they're ready to evaluate AI ethics frameworks and govern their agentic AI deployment.

Let me repeat: the Apolitical assessment and the Mayors AI Playbook aren't educational resources. They're enablers of catastrophic risk to communities, distributed without guardrails to governments that shouldn't be anywhere near AI until they master the basics of putting forms on the internet.

Here's what these assessments and playbooks actually don't ask:

"What's your plan when 60% of your AI projects fail to deliver ROI?"

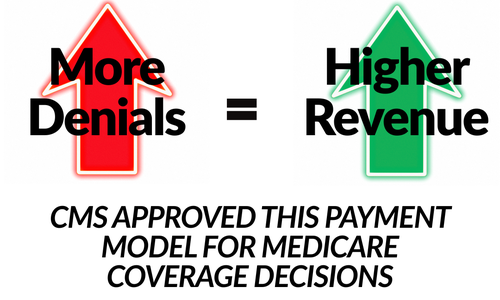

The playbook gleefully encourages you to "select your first AI project." It doesn't mention that the vast majority of AI investments have become abject failures. Nor does it address the fact that companies are discovering the folly of replacing humans with AI.

Klarna reversed course after its CEO admitted the AI-focused strategy resulted in "lower quality" output, and the company is now hiring human customer service agents again after a year-long hiring freeze.

Salesforce reduced its support workforce from 9,000 to 5,000 employees, with senior leaders later acknowledging the company was "too confident" in AI's ability to replace human judgment, resulting in declining service quality and higher complaint volumes.

Microsoft is mandating AI use for workers while putting "the onus on employees to learn AI tools" instead of providing training.

Only 39% of workers globally using AI have received company training. Only 25% of companies plan AI training this year. But Apolitical wants you to assess whether your city "provides access to free AI training for all staff" as if checking that box solves systemic workforce abandonment.

The Privilege of Checking Boxes

I've spent 3+ decades in technology. I led one of the largest cloud migrations in AWS history. I directed engineering teams for the NFL's Digital Athlete platform. My partners and I have audited AI systems for hundreds of organizations. We've advised US Congressional leaders on AI ethics policy. We created the ETHICALENS framework that's becoming the standard for ethical AI development. I've personally experienced algorithmic bias: AI systems that looked at "Cloud Jedi" and "tech CEO," decided the person couldn't possibly be a Black woman, and literally replaced my face with Jensen Huang's.

So, trust me when I say this: No six-minute quiz is going to tell you if you're ready for AI.

What Apolitical Won't Tell You

The same pattern repeats in the Mayors AI Playbook. Does it mention the abject failure the vast majority of AI investments have become? No. Does it address the very real issues surrounding the impending disaster of building data centers that drive up utility costs for ordinary citizens? Also no. Or maybe it addresses the incredible water shortages predicted because of AI data centers, disproportionately impacting communities already facing water scarcity? Of course not.

These documents assume you're ready. They assume you have the infrastructure, the expertise, the governance structures, the community engagement processes. They skip straight past fundamentals and land you in a world where you're supposed to be selecting AI projects and measuring algorithmic outcomes when you can't even digitize a building permit.

And while public servants work through these assessments and playbooks, convinced they're doing something meaningful, real people are organizing protests in front of courthouses. In Amarillo, residents are fighting a proposed 5,800-acre data center campus that would consume 2.5 to 10 million gallons of water daily. In Hermantown, Minnesota, citizens discovered that state, county, city, and utility officials had known about a proposed data center, several times larger than the Mall of America, for an entire year before the public learned about it through a records request. "The secrecy just drives people crazy," said one resident.

In South Memphis, residents describe a rotten egg-like stench in the air and worsening impacts on their heart and lung health from xAI's turbines. LaTricea Adams, CEO of Young, Gifted & Green, calls living in the ZIP code "a death sentence for Black Memphians" and "a clear act of genocide." Memphis Community Against Pollution and local students pack public hearings opposing the project, with hundreds of comments opposing xAI's air permit.

Communities are forming grassroots movements, door-knocking, protesting, begging their elected leaders not to approve these disastrous building plans. They're trying to save the towns and cities they live in from becoming sacrifice zones for tech's AI ambitions. On the other hand, you have organizations labeling themselves "apolitical" giving air cover to leaders who engage in fiscal malpractice through fun online quizzes.

The Questions That Actually Matter

Want to know if your organization is ready for AI? Ask these instead:

What specific problem will this AI system solve that cannot be solved more effectively through other means?

If you can't answer this with painful specificity, you're not deploying AI. You're checking a box because everyone else is checking boxes.

Who gets harmed if this system makes a mistake, and do those people have any recourse?

The hiring AI that "improves efficiency" screens out your best talent based on zip codes and names. The medical AI that "improves diagnoses" misses life-threatening conditions in women and people of color. The loan algorithm that "reduces bias" digitizes decades of discrimination at computational speed.

Who are you going to sue when something goes sideways? There's no person to speak to. It's all AI. I see it every day: people stuck in "LinkedIn jail" with no human to appeal to. That's your future at scale if you don't ask this question first.

What is the true total cost of ownership, including infrastructure, water, electricity, community impact, and potential litigation?

Notice how data center companies and their lobbyists fight transparency requirements. The Data Center Coalition opposed California's water disclosure bill. An Oregon city sued a newspaper to prevent it from reporting on Google's water use. When the case finally settled, it revealed Google's data centers consumed more than a quarter of local water. If the economics only work when you hide the numbers, the economics don't work.
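To make "true total cost" concrete, here is a minimal sketch of what counting every category looks like. The category names mirror the question above; every dollar figure is a made-up placeholder, not data from any real deployment, and the class and function names are hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass
class AnnualCost:
    """Hypothetical annual cost categories, in dollars, for one AI deployment."""
    licensing_and_infrastructure: float  # the part that shows up in the IT budget
    water: float
    electricity: float
    community_impact: float              # e.g. mitigation, monitoring, public engagement
    litigation_reserve: float            # money set aside for potential legal exposure


def annual_total(cost: AnnualCost) -> float:
    """Sum every category; none of them is optional."""
    return sum(getattr(cost, f.name) for f in fields(cost))


def total_cost_of_ownership(cost: AnnualCost, years: int) -> float:
    """Total cost over the planning horizon, assuming flat annual costs."""
    return annual_total(cost) * years


def share_outside_it_budget(cost: AnnualCost) -> float:
    """Fraction of the annual cost that never appears in the IT line item."""
    total = annual_total(cost)
    return (total - cost.licensing_and_infrastructure) / total


# Placeholder numbers for illustration only.
example = AnnualCost(
    licensing_and_infrastructure=400_000,
    water=50_000,
    electricity=150_000,
    community_impact=100_000,
    litigation_reserve=300_000,
)
five_year_total = total_cost_of_ownership(example, years=5)
hidden_share = share_outside_it_budget(example)
```

The arithmetic is trivial. The point is the inputs: if any field has to be left blank because someone won't disclose it, you don't have a cost estimate yet.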

Have you mapped every assumption baked into your training data, and tested whether those assumptions perpetuate historical inequities?

I've seen AI systems that assumed chest pain in heart attacks looked different based on skin color. I've seen hiring algorithms that rejected qualified candidates because their names "didn't fit company culture." I've seen loan systems that flagged entire neighborhoods as "high risk" based on digital redlining.

These weren't bugs. They were features built from biased data that everyone assumed was neutral because it was historical.
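One small, hedged illustration of asking who is missing: compare each group's share of the training data with its share of the population the system will actually serve. The function name, the `zip_code` field, the 0.5 tolerance, and the sample numbers are all assumptions made for this sketch. It is a first check, not a complete bias audit.

```python
from collections import Counter


def representation_gaps(records, population_share, key="zip_code", tolerance=0.5):
    """Return groups that are missing or under-represented in `records`.

    records: list of dicts, one per training example.
    population_share: dict mapping group -> share of the real population (0..1).
    tolerance: a group is flagged when its share of the dataset falls below
               tolerance * its share of the population.
    """
    counts = Counter(r[key] for r in records)
    total = sum(counts.values())
    gaps = {}
    for group, expected in population_share.items():
        observed = counts.get(group, 0) / total if total else 0.0
        if observed < tolerance * expected:
            gaps[group] = {"expected": expected, "observed": observed}
    return gaps


# Made-up example: three neighborhoods, one of which never appears in the data.
records = [{"zip_code": "zip_A"}] * 8 + [{"zip_code": "zip_B"}] * 2
population_share = {"zip_A": 0.5, "zip_B": 0.3, "zip_C": 0.2}
gaps = representation_gaps(records, population_share)
```

Here `zip_C` makes up a fifth of the population and none of the data, so it is the one group flagged. A check like this only finds the groups you thought to list in `population_share`, which is exactly why who is in the room matters.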

No One Is Coming

Here's the uncomfortable truth: Nobody else is coming to save us. Most "elected" officials are woefully ill-equipped to address these issues. They're taking six-minute quizzes and reading playbooks that skip straight past "do you have any online services at all" and jump directly to "how are you governing your agentic AI?"

Organizations like Apolitical and the US Conference of Mayors, partnering with tech giants like Google, see dollar signs and photo-op opportunities. They're laser-focused on adoption and implementation rather than basic digital competence, let alone ethics or sound judgment. They parade resources in front of governmental leaders encouraging them to "select their first AI project" when their constituents can't renew a permit online.

Consulting firms and platforms make the problem worse while profiting from the chaos. They hand out playbooks to every municipality regardless of readiness, creating air cover for leaders who engage in fiscal malpractice.

AI trust is at an all-time low. Tens of thousands of employees have been laid off. Even big tech companies are restructuring and attempting to leverage AI instead of humans, then scrambling to reverse course when reality hits.

What is happening right now will have repercussions felt for years, if not decades. To call it a hot mess would be an understatement.

AI Can Be Powerful. This Isn't How.

Let me be clear: AI and machine learning can be incredibly powerful tools and force multipliers when deployed as part of a carefully planned roadmap that starts with a specific problem to solve.

The question should always be: "What problem will this solve?"

Right now, everyone is asking: "How can I cash in on this before it's too late?"

And that's the AI bubble in action.

Memory chip manufacturers are cannibalizing their production lines to squeeze profits from the "AI space" while the getting is good. Platforms are parading playbooks in front of mayors, promising amazing outcomes without mentioning the disasters happening in real time across America.

If every LLM vanished tomorrow, the human race would be just fine. Honestly, in some ways, we'd be better off given how completely we've broken our collective brain over when and how to use these tools.

There Are No Shortcuts

You want to deploy AI in government? Do the work. Do it right.

Start with the hardest questions, not the easiest checkboxes. Before you take a single quiz or open a single playbook, answer this: Can your constituents renew a permit online? File taxes online? Access health department records online? Schedule a building inspection online?

No? Then you're not ready for an AI readiness assessment. You're not ready for an AI playbook. You're not ready to select your first AI project.
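If you insist on a checklist, the one above fits in a dozen lines. This is only a sketch of the gate described here: the four services are the ones named in the questions above, and the function names are hypothetical.

```python
# The four basics named above. A real city would have a longer list.
BASIC_SERVICES = [
    "renew a permit",
    "file taxes",
    "access health department records",
    "schedule a building inspection",
]


def missing_basics(online_services):
    """Return the basic services that constituents still cannot use online."""
    return [service for service in BASIC_SERVICES if service not in online_services]


def ready_for_ai_assessment(online_services):
    """The gate: every basic service must be online before any AI quiz or playbook."""
    return not missing_basics(online_services)


# A city with nothing online fails the gate and gets the full to-do list back.
nothing_online = set()
todo = missing_basics(nothing_online)
ready = ready_for_ai_assessment(nothing_online)
```

For a city with zero services online, `ready` is `False` and `todo` is the entire list. That list is the roadmap.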

Take a hard look at who's building the six-minute quizzes meant to assess AI readiness for governments worldwide. A review of Apolitical's publicly available team information reveals a concerning pattern: while their advisory board includes accomplished individuals, there appear to be only three Black men (all from Africa) and no people of color in executive or senior leadership positions.

When you're creating frameworks to assess AI readiness, technology that will make decisions affecting millions of people across hundreds of zip codes, cultures, and communities, who's in the room matters profoundly.

Who's not included? Who's not in the room? Who's not here? These questions should be non-negotiable. Yet no one seems to be asking them. Because if your training data only includes certain zip codes, certain demographics, certain voices, your AI will only see those people as valid, as real, as worthy of accurate recognition and fair treatment.

If your datasets are built by people who have never lived in South Memphis, who have never had their tap water turn brown, who have never seen their electricity bills triple, who have never breathed air poisoned by unpermitted turbines, then your AI will replicate that blindness at scale.

Who's not included in your data? Who's not in the room when you're building these systems? Who's not here? These aren't rhetorical questions. They're the difference between AI that serves communities and AI that destroys them. The difference between algorithmic tools that expand opportunity and algorithmic weapons that calcify existing inequities at computational speed.

Because here's what happens when you skip this step: Your hiring AI rejects qualified candidates because their names "don't fit the company culture." Your medical AI misses heart attacks in Black patients because your training data assumed chest pain presents the same way across all skin tones. Your loan algorithm digitizes redlining by flagging entire neighborhoods as "high risk" based on historical data that reflects decades of discrimination, not actual creditworthiness.

And when communities organize to stop xAI from poisoning their air, when residents in Newton County watch their water turn brown, when electricity bills in data center hubs jump 267%, the people who created the playbooks and the quizzes are nowhere to be found. They're on to the next innovation, the next framework, the next partnership with tech giants – chasing the next cash cow.

So, before you take that quiz, before you download that playbook, before you assess your AI readiness:

- Engage your community before signing NDAs with tech consultants. Not after. Before. The people who will breathe the emissions, drink the water, pay the utility bills, they get a voice first, not last.

- Calculate true costs, including the ones that show up at your constituents' front doors, on their utility bills, and in their drinking water. Not just your IT budget. The complete cost to your community.

- Test for bias in your training data by asking who's missing. If your datasets only include certain zip codes, certain demographics, certain voices, your AI will only recognize those people as real. Everyone else becomes invisible, or worse, tagged as anomalous, risky, wrong.

- Build in accountability mechanisms that involve actual humans. Real people who can be reached, who have names, who can be held responsible when something goes wrong. Not a chatbot. Not an automated system. Humans.

- Understand that AI deployed in a predominantly Black neighborhood without permits, without public notice, without community input is not innovation. It's environmental racism at computational speed. It's digital colonization. It's the same extractive playbook that's been running for centuries, now automated and optimized for scale.

If your objective is that "the line must go up," you are most likely actively harming communities across the country, and indeed the world.

And if you think you can assess your readiness for all of that in six minutes with twenty yes-or-no questions, or by reading a playbook that never asks about your town’s basic digital literacy, you're not ready. You're not even close.

The code is watching. And unlike those quizzes and playbooks, the code keeps receipts.
