Sam Altman Can't Be Trusted With Your Future (His Closest Colleagues Said So)

In Part 1, we showed you the pattern. AI infrastructure as the new tool of segregation. The bodies piling up while Sam Altman writes about keeping people first.

Today we talk about trust.

Not whether I trust Sam Altman or whether you trust him, but whether the people who worked closest with him for nearly a decade trust him.

Spoiler: They don't. And they documented why in 270 pages of internal memos, notes, and evidence.

The New Yorker spent months investigating. Published April 6, 2026. The same day OpenAI released their industrial policy vision. You're supposed to think the timing is coincidence.

Before we discuss what Sam wants the government to do, let's discuss who Sam is. Based on what his mentors, his board, his employees, and his closest colleagues said when they thought no one was watching.

Note: Unless otherwise indicated, all quotes and details in this article are sourced from Ronan Farrow and Andrew Marantz's investigation published in The New Yorker on April 6, 2026.

Paul Graham: "Sam Had Been Lying to Us All the Time"

Paul Graham made Sam Altman. Recruited him to run Y Combinator at 28. Called him unstoppable. Put him on lists of top founders before he'd earned it. His explanation: "Sam Altman can't be stopped by such flimsy rules."

When Sam was 23, Graham wrote: "You could parachute him into an island full of cannibals and come back in 5 years and he'd be the king." The assessment rested on Sam's will to prevail, not his track record. His startup, Loopt, had been acquired largely to help him save face, according to sources familiar with the deal.

By 2018, several Y Combinator partners were so frustrated with Sam's behavior they approached Graham to complain. Graham and his wife Jessica Livingston apparently had a frank conversation with Sam.

Afterward, Graham started telling people Sam had agreed to leave but was resisting in practice. Sam told some partners he would resign as president but become chairman instead.

In May 2019, a blog post announced that Y.C. had a new president, with an asterisk: "Sam is transitioning to Chairman of YC." Months later, the post was edited to read "Sam Altman stepped away from any formal position at YC." Then the phrase was removed entirely.

As recently as 2021, an SEC filing listed Sam as Y Combinator chairman. Sam says he wasn't aware until much later. Sam maintains he was never fired from Y.C. Graham has tweeted "we didn't want him to leave, just to choose" between Y.C. and OpenAI.

But in private conversations with Y.C. colleagues, Graham was unambiguous. According to multiple Y.C. founders and partners who spoke to The New Yorker: Paul Graham told colleagues that prior to Sam's removal, "Sam had been lying to us all the time."

The Loopt Pattern: Where It Started

Most of Sam's employees at Loopt liked him. But some were struck by his tendency to exaggerate, even about trivial things. One recalled Sam bragging widely that he was a champion ping-pong player ("Like, Missouri high-school ping-pong champ"), then proving to be one of the worst players in the office. Sam says he was probably joking.

Mark Jacobstein, an older Loopt employee asked by investors to act as Sam's "babysitter," told journalist Keach Hagey: "There's a blurring between 'I think I can maybe accomplish this thing' and 'I have already accomplished this thing' that in its most toxic form leads to Theranos."

Groups of senior employees, concerned with Sam's leadership and lack of transparency, asked Loopt's board on two occasions to fire him as CEO.

A board member responded: "This is Sam's company, get back to work." The pattern was visible early. The protection was there early too.

Ilya Sutskever: "I Don't Think Sam Should Have His Finger on the Button"

Ilya Sutskever was OpenAI's chief scientist. He officiated Greg Brockman's wedding in 2019. The ceremony was at OpenAI's offices. The ring bearer was a robotic hand. These were friends.

By fall 2023, Ilya had changed his mind. As he came to believe OpenAI was nearing artificial general intelligence, his doubts about Sam increased. As Ilya put it to another board member: "I don't think Sam is the guy who should have his finger on the button." At the behest of fellow board members, Ilya worked with like-minded colleagues to compile some 70 pages of Slack messages and HR documents, accompanied by explanatory text.

The material included images taken with a cellphone, apparently to avoid detection on company devices. He sent the final memos to other board members as disappearing messages, to ensure no one else would ever see them. "He was terrified," a board member who received them recalled. The memos have not previously been disclosed in full. The New Yorker reviewed them. They allege Sam misrepresented facts to executives and board members and deceived them about internal safety protocols.

One memo about Sam begins with a list headed "Sam exhibits a consistent pattern of..."

The first item: "Lying." Board member Helen Toner, an AI policy expert, and Tasha McCauley, an entrepreneur, received the memos as confirmation of what they already believed: Sam's role entrusted him with the future of humanity, but he could not be trusted.

The Firing That Failed

November 2023. Sam was in Las Vegas attending a Formula 1 race. Ilya invited him to a video call with the board and read a brief statement: Sam was no longer an employee of OpenAI.

The board released a public message: Sam had been removed because he "was not consistently candid in his communications."

Microsoft, which had invested $13 billion in OpenAI, learned of the plan to fire Sam moments before it happened. Satya Nadella, Microsoft's CEO: "I was very stunned. I couldn't get anything out of anybody."

Reid Hoffman, OpenAI investor and Microsoft board member: "I didn't know what the fuck was going on. We were looking for embezzlement, or sexual harassment, and I just found nothing."

The investor Ron Conway was having lunch with Nancy Pelosi when Sam called. "You better get out of here really quick," Pelosi told Conway.

Sam flew back to his $27 million San Francisco mansion. Set up what he called a "sort of government-in-exile." Conway, Airbnb co-founder Brian Chesky, and crisis communications manager Chris Lehane joined for hours daily by video and phone. Some of Sam's executive team camped out in the hallways. Lawyers set up in a home office next to his bedroom. Sam interrupted the war room at 6 PM each evening with Negronis, telling them: "You need to chill. Whatever's gonna happen is gonna happen."

His phone records show he was on calls more than 12 hours a day. Within hours of the firing, Thrive Capital had put its planned investment on hold. The deal would only be consummated if Sam returned. Microsoft announced it would create a competing initiative for Sam and any employees who left OpenAI.

A public letter demanding Sam's return circulated at the organization. Some people who hesitated to sign received imploring calls and messages from colleagues. A majority of OpenAI employees ultimately threatened to leave with Sam. The board was backed into a corner. Even Mira Murati, who had given Ilya material for his memos and was serving as interim CEO, eventually signed the letter.

Ilya later explained in a court deposition: "I felt that if we were to go down the path where Sam would not return, then OpenAI would be destroyed." Less than five days after his firing, Sam was reinstated. Ilya, Helen Toner, and Tasha McCauley lost their board seats. As a condition of their exit, they demanded the allegations against Sam be investigated. They also pressed for a new board that could oversee the inquiry with independence. But the two new members, former Harvard president Lawrence Summers and former Facebook CTO Bret Taylor, were selected after close conversations with Sam.

Sam texted Satya Nadella: "would you do this. bret, larry summers, adam as the board and me as ceo and then bret handles the investigation." McCauley later testified in a deposition that when Taylor was previously considered for a board seat, she'd had concerns about his deference to Sam. Employees now call this moment "the Blip." The debate over Sam's trustworthiness moved beyond OpenAI's boardroom.

Dario Amodei: 200 Pages of "His Words Were Almost Certainly Bullshit"

Dario Amodei was a biophysicist and a font of frenetic energy. He joined OpenAI early. Led the safety team. Like many AI researchers, Dario believed the technology should only be built if shown to be "aligned" with human values, meaning it would act in accordance with what people wanted without making a potentially fatal error. Sam was reassuring. He mirrored these safety concerns.

Dario took detailed notes on Sam and Greg Brockman's behavior for years. Heading: "My Experience with OpenAI." Subheading: "Private: Do Not Share." A collection of more than 200 pages of documents related to Dario, including those notes and internal emails and memos, has been circulated by colleagues in Silicon Valley but never before disclosed publicly.

In his notes, Dario wrote that Sam's goal was to build "an AI lab that would be focused on safety ('maybe not right away, but as soon as it can be')."

By 2018, Dario had started questioning the founders' motives more openly. "Everything was a rotating set of schemes to raise money," he later wrote. "I felt like what OpenAI needed was a clear statement of what it would do, what it would not do, and how its existence would make the world better." In early 2018, Dario drafted a charter for the company. He advocated for its most radical clause: if a "value-aligned, safety-conscious project" came close to building AGI before OpenAI did, the company would "stop competing with and start assisting this project."

The "merge and assist" clause. If Google figured out how to build safe AGI first, then OpenAI could wind itself down and donate its resources to Google.

By any normal corporate logic, this was insane. But OpenAI was not supposed to be a normal company. In spring 2019, OpenAI was negotiating a billion-dollar investment from Microsoft. Dario, who led the safety team, had helped pitch the deal to Bill Gates. Many people on the team were anxious about it, fearing Microsoft would insert provisions that overrode OpenAI's ethical commitments.

Dario presented Sam with a ranked list of safety demands, with preservation of the merge-and-assist clause at the very top. Sam agreed to that demand. But in June, as the deal was closing, Dario discovered that a provision had been added granting Microsoft the power to block OpenAI from any mergers. "Eighty percent of the charter was just betrayed," Dario recalled. He confronted Sam, who denied the provision existed.

Dario read it aloud, pointing to the text, and ultimately forced another colleague to confirm its existence to Sam directly. Sam doesn't remember this. Dario's notes describe escalating, tense encounters. Months later, Sam summoned Dario and his sister Daniela (who worked in safety and policy at OpenAI) to tell them he had it on "good authority" from a senior executive that they had been plotting a coup. Daniela "lost it," the notes continue, and brought in that executive, who denied having said anything. As one person briefed on the exchange recalled, Sam then denied having made the claim: "I didn't even say that." "You just said that," Daniela replied.

Sam said this was not quite his recollection, and that he had accused the Amodeis only of "political behavior." In 2020, Dario, Daniela, and other colleagues left to found Anthropic. It's now one of OpenAI's chief rivals.

Dario's language in the notes is heated, at times incensed: "His words were almost certainly bullshit." His conclusion: "The problem with OpenAI is Sam himself."

Aaron Swartz: "He Is a Sociopath. He Would Do Anything."

Aaron Swartz was a brilliant but troubled coder. He was in Sam's first Y Combinator batch in 2005. Swartz died by suicide in 2013. He's now remembered in many tech circles as something of a sage. Not long before his death, Swartz expressed concerns about Sam to several friends.

"You need to understand that Sam can never be trusted," he told one. "He is a sociopath. He would do anything."

Multiple people, unprompted, used the word "sociopathic" when speaking to The New Yorker about Sam. A board member: "He's unconstrained by truth. He has two traits that are almost never seen in the same person. The first is a strong desire to please people, to be liked in any given interaction. The second is almost a sociopathic lack of concern for the consequences that may come from deceiving someone."

Microsoft: "A Small But Real Chance He's Remembered as Bernie Madoff"

Multiple senior executives at Microsoft said that despite Nadella's long-standing loyalty, the company's relationship with Sam has become fraught. "He has misrepresented, distorted, renegotiated, reneged on agreements," one said. Earlier this year, OpenAI reaffirmed Microsoft as the exclusive cloud provider for its "stateless" models. That same day, it announced a $50 billion deal making Amazon the exclusive reseller of its enterprise platform for AI agents.

Microsoft executives argue OpenAI's plan could collide with Microsoft's exclusivity. OpenAI maintains the Amazon deal will not violate the earlier contract. The senior Microsoft executive: "I think there's a small but real chance he's eventually remembered as a Bernie Madoff- or Sam Bankman-Fried-level scammer."

The Investigation That Disappeared

After Sam's reinstatement, the departing board members made their exit conditional on there being an independent inquiry. The two new board members insisted they control that review. OpenAI enlisted WilmerHale, the distinguished law firm responsible for internal investigations of Enron and WorldCom. Six people close to the inquiry alleged it seemed designed to limit transparency. Some said the investigators initially did not contact important figures at the company. An employee reached out to Summers and Taylor to complain. "They were just interested in the narrow range of what happened during the board drama, and not the history of his integrity," the employee recalled. Others were uncomfortable sharing concerns about Sam because they felt there was not sufficient effort to ensure anonymity.

"Everything pointed to the fact that they wanted to find the outcome, which is to acquit him," the employee said. In March 2024, OpenAI announced it would clear Sam but released no report. The company provided, on its website, some 800 words acknowledging a "breakdown in trust." People involved in the investigation said no report was released because none was written. Instead, the findings were limited to oral briefings, shared with Summers and Taylor.

"The review did not conclude that Sam was a George Washington cherry tree of integrity," one of the people close to the inquiry said. But the investigation appears not to have centered the questions of integrity behind Sam's firing, devoting much of its focus to a hunt for clear criminality. On that basis, it concluded Sam could remain as CEO.

Shortly thereafter, Sam, who had been kicked off the board when he was fired, rejoined it.

The decision not to put the findings in writing was made in part on the advice of Summers's and Taylor's personal attorneys. When Uber's board investigated Travis Kalanick in 2017, it released a 13-page summary to the public. OpenAI released 800 words acknowledging a "breakdown in trust." No written report. No documentation. No accountability.

Sam said he believed all the board members who joined after his reinstatement received the oral briefings. "That's an absolute, outright lie," a person with direct knowledge of the situation said. Some board members told The New Yorker that ongoing questions about the integrity of the review could prompt "a need for another investigation."

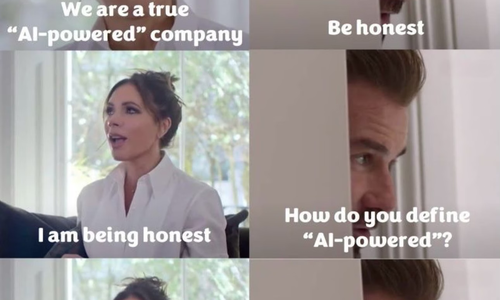

What Does This Mean for the Policy Document

Well, this is the same man who published 13 pages about keeping people first. About worker voice. About public wealth funds. About mission-aligned governance.

Those 13 pages came out the same day The New Yorker published evidence that his own board, his own mentor, his own safety team lead, and his own employees couldn't trust him. Paul Graham: lying to us all the time. Aaron Swartz: sociopath who would do anything. Ilya Sutskever: shouldn't have his finger on the button. Dario Amodei: the problem is Sam himself. Board member: unconstrained by truth. Microsoft executive: a small but real chance he's remembered as a Bernie Madoff-level scammer.

You're supposed to believe the timing is coincidence. You're supposed to trust that this time will be different. And you're supposed to ignore the pattern.

The 70 pages of memos.

The 200 pages of notes and documents.

The disappeared investigation.

The oral-only briefings.

The board members who never received them.

The Y Combinator firing.

The two attempts to oust him at Loopt.

The Microsoft contracts renegotiated.

The merge-and-assist clause betrayed.

Sam wants YOU to trust him with superintelligence. With AI infrastructure. With public wealth funds. With the future.

I don’t know Sam, but the people who worked closest with him for nearly a decade documented why we shouldn't trust him.

In the final part of our series, we’ll show you what Sam's "worker voice" proposals actually mean when analyzed against the blueprint Jack Dorsey published six days before Sam's policy document.

Spoiler: There's no need for a permanent middle management layer. The intelligence layer handles that. You're not getting a voice. You're getting replaced.

And the people building the intelligence layer? They're getting $1.5 million in equity. On average. This isn't about keeping people first. This is about who gets rich while everyone else gets the intelligence layer.

Next in this series: What does "worker voice" actually mean when the company is organized as an intelligence layer instead of a hierarchy? Jack Dorsey and Roelof Botha published "From Hierarchy to Intelligence" on March 31, 2026. Six days before Sam's policy document. Block is paring down to three roles: Individual contributors. Directly responsible individuals. Player-coaches. No middle management. The world model handles alignment. The intelligence layer coordinates. You don't need voice mechanisms when there's no management to have voice with. The transition isn't to a better job. The transition is to not having one. Part 3: "What 'Worker Voice' Actually Means (Spoiler: You're Fired)" comes out tomorrow.
