Europe Built Guardrails. America Published a Study Guide. OpenAI Proved Who Was Right.

What Just Happened: The US Department of Labor released its AI Literacy Framework on February 13, 2026. Four days earlier, OpenAI launched ads in ChatGPT with conversation monetization turned on by default. Meanwhile, the EU AI Act has been in force since August 2024, with penalties of up to €35 million or 7% of worldwide annual turnover for the most serious violations.

Why You Should Care: If you're building AI systems, buying AI services, or making compliance decisions, you're watching three completely different regulatory philosophies collide in real time. One puts legal obligations on AI providers before deployment. One asks workers to protect themselves after deployment. One turns worker conversations into advertising inventory while both are happening.

The gap between these approaches isn't just a policy difference. It's a fundamental choice about who bears the risk when AI systems cause harm.

The Tale of Three Frameworks

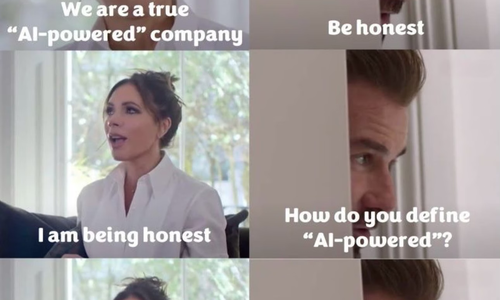

Europe wrote a binding law. America wrote a study guide. OpenAI gave itself permission to monetize your conversations.

Here’s what we mean.

The EU AI Act classifies AI systems by risk and bans the most dangerous applications outright. Social scoring systems. Manipulative AI that exploits vulnerabilities. Certain biometric surveillance. Emotion recognition in workplaces and schools. Eight categories of AI deemed too harmful to allow, period.

For high-risk systems in critical infrastructure, healthcare, employment, law enforcement, and education, providers must prove safety before deployment. Risk assessments. Data governance. Technical documentation. Human oversight. Conformity assessments. All mandatory. All enforceable. All backed by fines that make GDPR penalties look gentle.

The DOL AI Literacy Framework teaches workers five foundational content areas: understand AI principles, explore AI uses, direct AI effectively, evaluate AI outputs, and use AI responsibly.

Notice what's missing?

Prohibited uses. Provider obligations. Enforcement mechanisms. Workers’ rights to refuse AI systems. Discrimination prevention standards. Penalties for violations.

The framework puts the entire burden of "responsible AI use" on individual workers while placing zero binding obligations on the organizations deploying AI systems.

OpenAI's approach demonstrates why voluntary frameworks fail. On February 9, 2026, they launched ads in ChatGPT with these defaults:

- Ad personalization: ON

- Conversation data collection: ON

- Chat history for targeting: ON

- User responsibility to opt out: Entirely yours

Your conversations are monetized by default. Disabling personalization doesn't stop ads. It just limits which conversations they mine. Want ad-free? Free users get fewer messages. Everyone else pays $20/month.
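To see why the default matters, here is a minimal sketch that counts the manual actions a user faces under opt-out versus opt-in defaults. The setting names are hypothetical labels for the three toggles listed above. They are not OpenAI's actual setting keys or any real API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Setting:
    name: str                 # hypothetical label, not a real ChatGPT setting key
    enabled_by_default: bool


# The three defaults listed above, modeled as opt-out settings.
OPT_OUT_DEFAULTS = [
    Setting("ad_personalization", True),
    Setting("conversation_data_collection", True),
    Setting("chat_history_for_targeting", True),
]

# The same settings under an opt-in model: nothing is on until the user says so.
OPT_IN_DEFAULTS = [Setting(s.name, False) for s in OPT_OUT_DEFAULTS]


def actions_needed_to_stop_targeting(settings: list[Setting]) -> list[str]:
    """Return the settings a user must switch off manually."""
    return [s.name for s in settings if s.enabled_by_default]


print(len(actions_needed_to_stop_targeting(OPT_OUT_DEFAULTS)))  # 3
print(len(actions_needed_to_stop_targeting(OPT_IN_DEFAULTS)))   # 0
```

Same product, same toggles. The only thing that changes is who has to do the work.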

The DOL framework says workers should "understand what types of data should not be entered into AI tools and how to prevent accidental disclosure of confidential information."

OpenAI says "we've monetized your conversations by default, good luck remembering to check the settings."

See the problem?

What an Actual AI Governance Framework Looks Like

Blake, Carl and I are ISO 42001 certified Lead Auditors. That's the international standard for AI Management Systems. This is what organizational AI governance actually requires.

ISO 42001 includes:

- Risk management systems with defined acceptance criteria

- Data governance requirements including bias detection

- Technical documentation standards

- Human oversight mechanisms with authority to intervene

- Transparency and accountability controls

- Quality management integration

- 38 specific controls covering everything from AI system inventory to incident management

Every single requirement places obligations on the ORGANIZATION deploying AI systems, not on individual workers using them.
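To make "organizational obligation" concrete, here is a rough sketch of what one entry in an AI system inventory with a defined risk acceptance criterion might look like. The 1-to-5 scoring scale, the threshold of 9, and every field name are our illustrative assumptions. ISO 42001 does not prescribe a scoring method or these names.

```python
from dataclasses import dataclass

# Hypothetical acceptance criterion: scores above this need treatment or sign-off.
ACCEPTANCE_THRESHOLD = 9


@dataclass
class AISystemRecord:
    name: str
    owner: str                # accountable role inside the organization
    likelihood: int           # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int               # assumed scale: 1 (negligible) to 5 (severe)
    human_oversight: bool     # someone has authority to intervene
    documented: bool          # technical documentation exists

    @property
    def risk_score(self) -> int:
        return self.likelihood * self.impact


def audit_findings(record: AISystemRecord,
                   threshold: int = ACCEPTANCE_THRESHOLD) -> list[str]:
    """List the gaps an internal audit would flag for one inventory entry."""
    findings = []
    if record.risk_score > threshold:
        findings.append("risk score exceeds acceptance criterion")
    if not record.human_oversight:
        findings.append("no human oversight mechanism assigned")
    if not record.documented:
        findings.append("technical documentation missing")
    return findings


screener = AISystemRecord(
    name="resume-screener", owner="HR Director",
    likelihood=3, impact=4, human_oversight=True, documented=False,
)
print(audit_findings(screener))
```

Notice that every finding lands on the organization's record, not on the employee who typed the prompt.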

The DOL framework asks workers to:

- Understand AI principles

- Evaluate AI outputs for accuracy

- Protect sensitive information

- Use AI responsibly

- Maintain accountability

ISO 42001 requires organizations to:

- Implement risk management systems

- Establish data governance processes

- Create technical documentation

- Deploy human oversight mechanisms

- Maintain audit trails and evidence

That's not a different approach. That's a complete inversion of accountability.

When an AI system causes harm under ISO 42001, the organization must prove its risk management system worked as designed, demonstrate what controls were in place, show the audit trail, and correct the management system.

When an AI system causes harm under the DOL framework, someone asks: Did the worker use AI responsibly? Did they evaluate the output properly? Why didn't they protect sensitive information?

One puts liability on systems. The other puts liability on individuals.

The Enforcement Gap Tells You Everything

EU penalties for prohibited AI practices: €35 million or 7% of worldwide annual turnover, whichever is higher.

EU penalties for high-risk system violations: €15 million or 3% of worldwide annual turnover, whichever is higher.

US penalties for anything in this framework: Zero. Voluntary guidance.
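"Whichever is higher" is worth working through. The sketch below computes the fine ceiling for the two EU tiers quoted above. It is a simplified illustration, not legal advice: the tier keys and function name are ours, and it ignores any special cases or reductions the regulation provides.

```python
# Ceilings quoted above: (fixed amount in euros, percent of worldwide annual turnover).
PENALTY_TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "high_risk_violation": (15_000_000, 3),
}


def max_fine_eur(tier: str, annual_turnover_eur: int) -> int:
    """Return the higher of the fixed ceiling and the turnover-based ceiling."""
    fixed_eur, percent = PENALTY_TIERS[tier]
    turnover_based_eur = annual_turnover_eur * percent // 100
    return max(fixed_eur, turnover_based_eur)


# A company with EUR 2 billion in turnover: the percentage dominates.
print(max_fine_eur("prohibited_practice", 2_000_000_000))  # 140000000
print(max_fine_eur("high_risk_violation", 2_000_000_000))  # 60000000

# A company with EUR 100 million in turnover: the fixed amount dominates.
print(max_fine_eur("prohibited_practice", 100_000_000))    # 35000000
```

The percentage overtakes the fixed amount once turnover passes €500 million in both tiers, so for large providers the exposure scales with revenue.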

This isn't a regulatory philosophy difference. This is choosing not to regulate while calling it innovation policy.

The Timeline Reveals the Strategy

April 2021: EU proposes AI Act

July 2024: EU AI Act becomes law

August 2024: EU AI Act enters into force

February 2025: EU prohibitions take effect

August 2025: EU penalty provisions take effect

February 9, 2026: OpenAI launches ads in ChatGPT

February 13, 2026: DOL releases AI Literacy Framework

We're at least 18 months behind Europe with voluntary guidance while they're enforcing binding law. The US published worker education tips the same week a major AI company demonstrated exactly why voluntary approaches don't work.
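The gaps in that timeline are simple date arithmetic, sketched below. The timeline gives only "August 2024" for entry into force, so the day of the month used here (the 1st) is our assumption.

```python
from datetime import date

EU_AI_ACT_IN_FORCE = date(2024, 8, 1)      # day of month is an assumption
OPENAI_ADS_LAUNCH = date(2026, 2, 9)
DOL_FRAMEWORK_RELEASE = date(2026, 2, 13)


def whole_months_between(start: date, end: date) -> int:
    """Count complete calendar months from start to end."""
    months = (end.year - start.year) * 12 + (end.month - start.month)
    if end.day < start.day:
        months -= 1  # the final month has not fully elapsed
    return months


days_ads_to_framework = (DOL_FRAMEWORK_RELEASE - OPENAI_ADS_LAUNCH).days
months_behind = whole_months_between(EU_AI_ACT_IN_FORCE, DOL_FRAMEWORK_RELEASE)

print(days_ads_to_framework)  # 4
print(months_behind)          # 18
```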

That's not leadership. That's performance art.

What Workers Actually Get

Under the EU AI Act:

- Protection from prohibited AI systems

- Right to file complaints with national authorities

- Mandatory human oversight for high-risk AI

- Provider obligations to prove safety before deployment

- Transparency about when AI is being used

- Legal recourse when systems cause harm

Under the DOL AI Literacy Framework:

- Suggestions employers can ignore

- Tips on writing better prompts

- Encouragement to "use AI responsibly"

- No enforcement mechanism whatsoever

- No rights they didn't already have

- Individual responsibility for systemic problems

Under OpenAI's ad model:

- Your conversations become advertising inventory

- Monetization enabled by default

- Manual opt-out required to disable targeting

- Reduced functionality if you want ad-free without paying

- Your work discussions, project brainstorms, and strategy sessions used for ad personalization

- Unless you remember to check the settings

The Questions Nobody's Asking

If AI literacy is the answer, what exactly is the question?

Because it sure isn't "how do we prevent algorithmic bias, discrimination and harm?" We already know that answer: Test for bias before deployment, prohibit harmful uses, require human oversight, enforce with meaningful penalties.

The EU implemented exactly that. It's working. Companies are complying. Workers are protected.

Why does the US framework mention zero prohibited uses when the EU identified eight categories harmful enough to ban entirely?

Why release worker education guidance the same week OpenAI proves voluntary frameworks fail by defaulting to conversation monetization?

Why ignore ISO 42001, the international standard that already defines what organizational AI governance requires, and instead publish tips for individual workers?

The ISO 42001 Reality Check

Organizations using ISO 42001 as the foundation for EU AI Act compliance need:

Management system infrastructure: AI governance committees, defined roles, resource allocation, top management commitment.

Technical implementation: AI system inventory, risk assessment methodology, data governance processes, technical documentation, monitoring procedures.

Evidence and audit: Document control, record retention, internal audit programs, management review, continual improvement.

That's what responsible AI governance actually looks like at minimum. Management systems. Audit trails. Evidence. Controls. Continuous improvement.

The DOL framework suggests we can replace all of that with worker training.

As auditors, we can verify ISO 42001 compliance by examining risk management systems, technical documentation, governance processes, and audit trails.

What can anyone verify with the DOL framework? Training completion rates. Worker knowledge assessments. Policy acknowledgment forms.

One demonstrates organizational commitment through systematic governance. The other demonstrates workers were told to be careful.

What This Means for You

If you're deploying AI systems:

The EU requires proof of safety before deployment.

The US suggests you provide training materials after deployment.

One of these approaches prevents liability. The other documents it.

Your EU competitors are implementing ISO 42001-aligned management systems because certification is likely to become a procurement requirement. Your US competitors are... hoping worker literacy solves governance problems.

Guess which position survives regulatory scrutiny?

If you're buying AI services:

Every free ChatGPT conversation your team has about work is now advertising inventory unless someone manually opted out. Project discussions. Client strategies. Competitive analysis. Performance reviews.

The DOL says workers should protect sensitive information.

OpenAI says conversations are monetized by default.

Your information security team is screaming internally.

This is what happens when literacy replaces regulation.

If you're advising on compliance:

Point to the EU AI Act when someone claims comprehensive AI regulation is impossible. It exists. It's enforceable. Companies are implementing it. The US chose not to build it.

ISO 42001 provides the systematic governance framework organizations actually need. The DOL framework provides talking points for why you told workers to be careful.

Which documentation survives the lawsuit?

If you're a worker:

The DOL framework gives you zero new protections. Your employer can deploy any AI system they want. Your job is to get literate enough to work alongside it.

The framework says you should "maintain accountability" for AI outputs while giving you zero authority to refuse AI systems, demand documentation, or require changes.

That's not education. That's liability transfer.

The Credibility Problem Gets Worse

The DOL framework appears in Training and Employment Notice 07-25. It references Executive Order 14277, "Advancing Artificial Intelligence Education for American Youth."

The references section cites America's Talent Strategy and Winning the Race: America's AI Action Plan. Section 2 states that collaborative engagement is "critical to the future of talent development and the reindustrialization of America."

Translation: Speed matters more than safety. Economic competitiveness trumps (no pun intended) worker protection. Deploy AI fast, teach workers to adapt faster.

The EU said "prove your system is safe before deployment."

The US said "deploy whatever you want, we'll teach workers to handle it."

One prevents harm. The other manages casualties.

The Business Model Reality

According to industry analysis, OpenAI charges advertisers approximately $60 per 1,000 impressions with a $200,000 minimum buy-in for beta program participation.
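Here is what those reported figures imply, as a quick back-of-the-envelope sketch. The $60 CPM and $200,000 minimum are the industry estimates cited above, not confirmed pricing, and the function name is ours.

```python
def impressions_for_budget(budget_usd: int, cpm_usd: int) -> int:
    """Impressions a budget buys at a given CPM (cost per 1,000 impressions)."""
    return budget_usd * 1000 // cpm_usd


REPORTED_CPM_USD = 60            # reported estimate, not confirmed pricing
REPORTED_MINIMUM_USD = 200_000   # reported beta minimum buy-in

print(impressions_for_budget(REPORTED_MINIMUM_USD, REPORTED_CPM_USD))  # 3333333
print(REPORTED_CPM_USD / 1000)                                         # 0.06
```

At those rates, a single minimum buy-in purchases roughly 3.3 million ad impressions, at about six cents each.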

Workers using free ChatGPT are the product, not the customer.

The DOL framework never mentions this business model. Never addresses what happens when the AI tools workers are told to master are optimized for advertiser revenue, not user outcomes.

We're telling workers to get literate while the systems they're learning monetize their attention and conversations.

That's not education policy. That's preparation for extraction.

What Should Have Been Done

The DOL could have issued guidance requiring:

Organizations deploying AI systems affecting workers must implement ISO 42001-aligned or, at minimum, NIST-aligned management systems, pursue third-party certification within 24 months, integrate with ISO 27001 information security management, establish governance committees with worker representation, maintain audit trails demonstrating compliance, and report annually on AI governance maturity.

That would have been actual governance.

Instead, they published a framework asking workers to:

- Write better prompts

- Evaluate outputs carefully

- Use AI responsibly

- Protect sensitive information

- Maintain accountability

While AI companies:

- Default to conversation monetization

- Require manual opt-out from targeting

- Use chat history as advertising inventory

- Face zero regulatory constraints

- Optimize systems for revenue, not safety

The Bottom Line

Europe built a regulatory framework that prohibits harmful AI applications, requires providers to prove safety before deployment, mandates transparency and human oversight, and enforces compliance with penalties of up to €35 million or 7% of worldwide annual turnover.

America published voluntary guidance asking workers to learn prompt engineering and use AI responsibly.

Four days before the DOL framework dropped, OpenAI demonstrated exactly why that approach fails by launching ads with conversation monetization on by default.

ISO 42001 proves that systematic AI governance frameworks exist, they're internationally recognized, and they work. The DOL chose not to require them.

This isn't a policy disagreement. This is a fundamental choice about who should bear the risk when AI systems cause harm.

Europe chose to regulate providers. America chose to educate workers.

One prevents harm. The other documents it.

What You Can Do Now

If you're serious about AI governance:

Implement ISO 42001. Get certified. Build the management systems you'll need when regulations inevitably follow. Your EU competitors already started. Your enterprise buyers increasingly require it. Your liability insurers will thank you.

If you're using AI systems at work:

Check those settings. Free ChatGPT monetizes conversations by default. Your work discussions are advertising inventory unless you opted out. The DOL says that's your responsibility to manage.

If you're making compliance decisions:

Stop treating voluntary frameworks as if they're regulatory compliance. The EU AI Act is law. ISO 42001 is systematic governance. The DOL framework is non-binding guidance.

Build for the regulation that's coming, not the suggestion that's here.

If you're watching AI policy:

Notice who bears the risk in each framework.

Notice who profits from the business models.

Notice who faces penalties for violations.

Then ask yourself why America keeps choosing education over enforcement.

The answer tells you everything about who this system is designed to protect.

Spoiler: It's not the workers learning to write better prompts.

Want the frameworks nobody else is talking about? Subscribe to What Just Happened and Why You Should Care for the AI governance analysis that actually matters.
