CMS Just Handed Medicare's Coverage Decisions to AI Companies That Profit from Denials

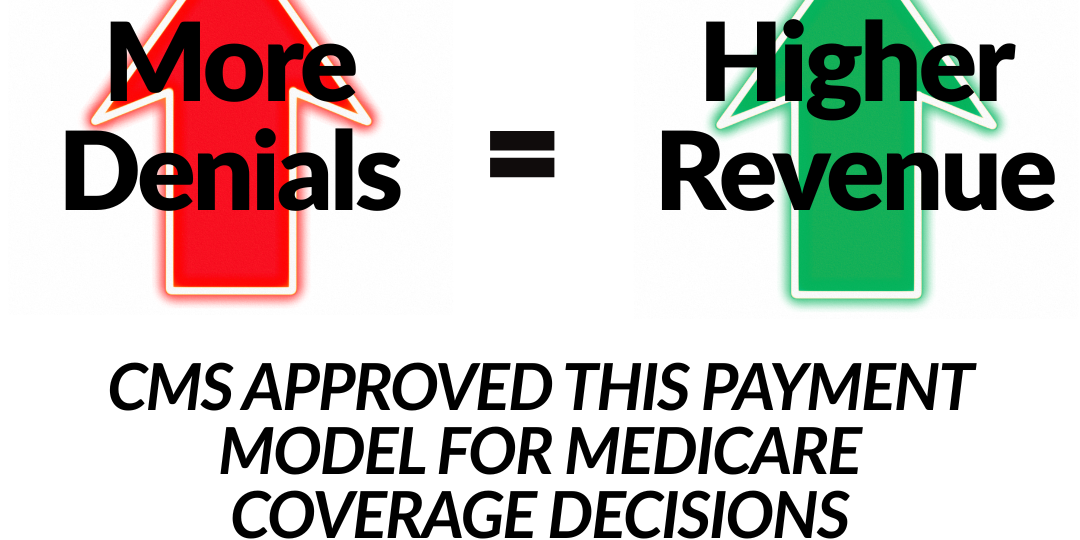


AI providers (“Model Participants”) will get compensated based on their share of averted expenditures.

Read that carefully. We’ll come back to it in a moment.

The federal government launched a six-year experiment in algorithmic healthcare rationing on January 1, 2026. They're calling it waste reduction, but the payment structure tells a different story.

On July 1, 2025, the Centers for Medicare & Medicaid Services (CMS) published a Federal Register notice announcing the Wasteful and Inappropriate Service Reduction Model (WISeR). The document runs 5 pages and contains one detail that should alarm every Medicare beneficiary, healthcare provider, and patient advocate in America:

Model participants get compensated based on their share of averted expenditures.

Net result? The third-party companies reviewing your prior authorizations earn more money when they deny more services.

CMS buried this payment structure in the middle of the notice, framed as a payment approach that tests whether technology companies can "help streamline the review of medical necessity" (p. 28751).

This is institutionalized conflict of interest with federal approval.

The Model Structure: What CMS Actually Announced

As of January 1, 2026, WISeR operates in six states (New Jersey, Ohio, Oklahoma, Texas, Arizona, and Washington) and runs through December 31, 2031. CMS selected these locations based on Medicare Administrative Contractor (MAC) jurisdictions, service volume, and the presence of existing Local Coverage Determinations for skin and tissue substitutes.

WISeR covers 17 services (p. 28751), including electrical nerve stimulators, sacral nerve stimulation, deep brain stimulation, epidural steroid injections, cervical fusion, skin and tissue substitutes, and hypoglossal nerve stimulation for sleep apnea. CMS selected these services based on "existing evidence of potential FWA [fraud, waste, and abuse]" and "alignment with definitions and evidence around services defined as low value by Schwartz et al." as well as "services already subject to prior authorization in MA [Medicare Advantage] at the time."

Providers can submit prior authorization requests to either the Medicare Administrative Contractor or directly to the model participant. Model participants must decide within 72 hours for standard requests and 48 hours for expedited requests (CMS WISeR Model FAQ, 2026). If providers don't submit prior authorization, claims automatically route to pre-payment medical review by the same model participants.

CMS states that model participants will use "AI and machine learning" combined with clinician review (p. 28750). The notice requires that "an adverse medical necessity decision related to a prior authorization request must be reviewed by a physician or other health care professional with appropriate expertise before" the model participant issues the decision.

The Payment Problem: When Algorithms Earn Money from Denials

The Federal Register notice states model participants "will receive a percentage of the savings associated with averted wasteful, inappropriate care as a result of their reviews" with "that percentage" adjusted "based on the participant's performance on measures related to the process, including provider experience" (p. 28750).

This payment structure creates a direct financial incentive to deny claims. When companies profit from rejecting services, the math becomes simple: more denials equal higher revenue. CMS conducted "market research from various different MA organizations that had experience with enhanced technology-enabled prior authorization processes" and found that "some MA plans reported decision time to prior authorization approval being almost instantaneous for services with very clear clinical coverage criteria" (p. 28750).

But what the document doesn't address is denial rates or accuracy.
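To make the incentive concrete, here is a minimal sketch of the arithmetic, assuming a simple reading of the notice in which payment equals a share of averted expenditures scaled by a performance adjustment. The notice does not publish the actual percentage, the adjustment formula, or any dollar figures, so every name and number below (`ReviewPeriod`, `participant_payment`, the 15% share, the $5,000 average service cost) is hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class ReviewPeriod:
    """Hypothetical summary of one model participant's review activity."""
    requests_reviewed: int    # prior authorization requests reviewed
    denial_rate: float        # fraction of requests denied, 0.0 to 1.0
    avg_service_cost: float   # assumed average Medicare payment per denied service (USD)


def participant_payment(period, savings_share, performance_adjustment=1.0):
    """Illustrative payment: a share of averted spending, scaled by performance.

    Both savings_share and performance_adjustment are assumptions; the
    Federal Register notice describes the structure but not the values.
    """
    averted = period.requests_reviewed * period.denial_rate * period.avg_service_cost
    return averted * savings_share * performance_adjustment


# Same workload, same assumed 15% share; only the denial rate changes.
for rate in (0.05, 0.10, 0.20):
    period = ReviewPeriod(requests_reviewed=10_000, denial_rate=rate, avg_service_cost=5_000.0)
    payment = participant_payment(period, savings_share=0.15)
    print(f"denial rate {rate:.0%}: payment ${payment:,.0f}")
```

Under these assumptions, payment scales linearly with the denial rate: doubling denials doubles revenue unless the performance adjustment pushes back hard enough to offset it. Nothing in this sketch captures whether a denial was accurate, which is the gap the notice leaves open.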

The Data on Prior Authorization Harm

The evidence on prior authorization causing patient harm is substantial and growing. A 2024 AMA survey of 1,004 physicians found that "24% of physicians reported that prior authorization has led to a serious adverse event for a patient in their care, including hospitalization, permanent impairment, or death." The same survey found "94% reported that prior authorization delays access to necessary care" and "78% reported that patients abandon treatment due to authorization struggles."

A 2025 systematic review published in The American Journal of Medicine analyzed 25 studies and found that "in addition to care delays, authorization requirements were associated with disease exacerbation, preventable hospitalization, prolonged hospital stay, and lower rates of disease-free survival" spanning "oncology, cardiology, behavioral health and pediatrics."

In cancer care specifically, an ASTRO survey found 92% of radiation oncologists reported prior authorization delays, with 68% reporting delays lasting 5 days or longer, "a significant increase from 52% in 2020." One-third of physicians reported patients abandoning radiation treatment due to prior authorization barriers. The survey documented cases where prior authorization led to patient deaths.

Johns Hopkins researchers reviewing prior authorization evidence stated that "delays caused by prior authorization requirements result in harm across populations and health plans" and noted that "in oncology, even delays of one to three weeks in starting guideline-based treatments correlates with worse control of disease and lower survival."

The 2019 HHS Office of Inspector General study "estimated that treatment by health care providers who were subsequently prosecuted for fraud and/or abuse contributed to as many as 6,700 premature deaths among Medicare FFS beneficiaries."

Demographic Disparities in Prior Authorization

Let’s be real and honest. Prior authorization does not affect all patients equally.

A 2024 study published in The American Journal of Managed Care analyzed 18,041 cancer patients and found that "the average prior authorization denial rate was 10%, and the denial rate specifically due to no medical necessity was 5%." By group, "Hispanic patients had the highest prior authorization denial rate (12%)," while "Black patients had the lowest prior authorization denial rate (8%)."

A study of gynecologic oncology care found that "37% of patients who identified as Asian experienced prior authorization requirements, compared to 20.1% of those who identified as Black, 17.9% of Hispanic patients, and 23.1% of white patients."

Research published in JAMA Network Open in 2024 examined 1,535,181 patients seeking preventive care and found that "at-risk populations, including low-income patients, patients with a high school degree or less, and patients from minoritized racial and ethnic groups, experienced higher rates of claim denials."

A 2022 HHS Office of Inspector General report found that "13% of prior authorization denials in MA [Medicare Advantage] would have been approved under TM [traditional Medicare]," raising concerns about beneficiary access to medically necessary care.

A KFF Health Tracking Poll from September 2025 found that "73% of adults say that delays and denials of health care services by health insurance companies are 'a major problem.'" Among insured adults who needed prior authorization in the past two years, "48%" reported their "health insurance company has delayed their ability to get" services and "43%" reported denials of "a service, treatment, or medication that their doctor requested." Only about 1% of denied claims are appealed, despite high rates of denials being overturned when challenged.

AI Bias in Healthcare Coverage Decisions

Research demonstrates that AI systems can and do perpetuate and amplify existing biases in healthcare, and they do so at scale.

Multiple sources report that "a growing body of research has found AI can reinforce bias that's found elsewhere in medicine, discriminating against women, ethnic and racial minorities, and those with public insurance." Pennsylvania State Representative and emergency room physician Dr. Arvind Venkat stated that "the conclusions from artificial intelligence can reinforce discriminatory patterns and violate privacy in ways that we have already legislated against." UnitedHealthcare, which covers 49 million Americans, denied nearly one-third of all in-network claims in 2022, representing "the highest rate among major insurers" according to federal data.

States are responding to AI bias concerns. Arizona, Maryland, Nebraska, and Texas, for example, have banned insurance companies from using AI as the sole decision-maker in prior authorization or medical necessity denials. California enacted legislation in 2024 that prohibits health care coverage denials from being made solely by AI without an ultimate human decision-maker.

The National Association of Insurance Commissioners adopted a Model Bulletin on AI use in 2023, requiring insurers to "evaluate AI systems for potential bias and unfair discrimination in regulated processes such as claims, underwriting and pricing" and to "proactively address them."

The Overtrust Problem: Why Patients Cannot Detect Dangerous AI Medical Advice

The danger of AI-driven healthcare decisions extends beyond bias. Research published in NEJM AI in May 2025 reveals a pattern that makes WISeR's payment structure particularly dangerous: people cannot distinguish AI-generated medical advice from physicians' responses and demonstrate alarming levels of trust in inaccurate AI recommendations.

In a study of 300 participants evaluating medical responses from both doctors and AI systems, researchers from MIT and Stanford found that "participants could not effectively distinguish between AI-generated responses and doctors' responses" achieving only 50% accuracy in identifying the source, essentially random chance.

The study evaluated 150 AI-generated medical responses using GPT-3. Physicians rated 44% of these responses as having low accuracy. Yet when presented to non-expert participants, these inaccurate responses "performed very similarly to doctors' responses" across metrics of validity, trustworthiness, and completeness.

Participants rated low-accuracy AI responses as "valid, trustworthy, and complete/satisfactory" and "indicated a high tendency to follow the potentially harmful medical advice and incorrectly seek unnecessary medical attention as a result of the response provided" with a "problematic reaction" that was "comparable with, if not stronger than, the reaction they displayed toward doctors' responses."

The researchers concluded that "the increased trust placed in inaccurate or inappropriate AI-generated medical advice can lead to misdiagnosis and harmful consequences for individuals seeking help" and warned that "overrelying on false or incomplete AI-generated responses could lead to delayed or inappropriate treatment, potentially worsening health outcomes and even endangering lives."

This creates a dangerous combination when layered onto the WISeR payment model. Model participants profit from denials. If patients cannot detect when AI recommendations are inaccurate, what about clinicians?

The MIT and Stanford researchers found that even physicians showed bias in evaluating AI responses. When experts knew responses were AI-generated, "they evaluated the AI-generated responses as significantly lower in all three metrics: accuracy, strength, and completeness." But when the same experts evaluated responses without knowing the source, "there was no significant difference in their evaluation" between AI and physician responses. This confirms what the researchers called "a bias presented by experts against AI-generated responses when the source of the response is indicated."

For WISeR, this means model participants' AI systems could generate inaccurate recommendations that clinicians trust and follow, while the profit structure incentivizes maximizing denials regardless of accuracy or patient impact.

The Gold Card Exemption: Normalizing 10% Denial Rates

CMS states they are "exploring implementation of 'gold carding'" to exempt compliant providers from prior authorization. The Federal Register notice indicates "a provider/supplier could be exempt from prior authorization if they achieve a prior authorization provisional affirmation threshold of 90 percent during a periodic assessment, thereby demonstrating a sufficient understanding of the requirements for submitting an accurate claim."

This policy explicitly accepts that a 10% denial rate represents acceptable performance. The notice states "100 percent compliance may not be necessary as there could be unintentional or sporadic errors that could occur that are not deliberate or a result of issues out of the provider's/supplier's control."

The exemption can be withdrawn "if evidence became available, based on a review of claims, that the provider/supplier had begun to submit claims that are not payable based on FFS Medicare's billing, coding, or payment requirements during a periodic assessment."

This creates pressure for providers to maintain 90% approval rates or face increased scrutiny. Providers treating complex patients or attempting appropriate but edge-case treatments risk losing exemption status.
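The threshold check described in this section can be sketched in a few lines. This is an illustration only: the notice gives the 90 percent provisional affirmation figure but does not define the assessment period, minimum request volume, or how the rate is calculated, so the function names and the simple affirmed-over-submitted ratio below are assumptions.

```python
GOLD_CARD_THRESHOLD = 0.90  # provisional affirmation threshold quoted in the notice


def affirmation_rate(affirmed, submitted):
    """Fraction of prior authorization requests that were provisionally affirmed."""
    if submitted <= 0:
        raise ValueError("no requests submitted in the assessment period")
    if not 0 <= affirmed <= submitted:
        raise ValueError("affirmed must be between 0 and submitted")
    return affirmed / submitted


def meets_gold_card_threshold(affirmed, submitted, threshold=GOLD_CARD_THRESHOLD):
    """Assumed eligibility rule: affirmation rate at or above the threshold."""
    return affirmation_rate(affirmed, submitted) >= threshold


# Hypothetical providers: (label, affirmed, submitted)
examples = [
    ("high-volume clinic", 180, 200),
    ("same clinic, five more denials", 175, 200),
    ("small practice, one denial", 8, 9),
]
for label, affirmed, submitted in examples:
    rate = affirmation_rate(affirmed, submitted)
    status = "eligible" if meets_gold_card_threshold(affirmed, submitted) else "not eligible"
    print(f"{label}: {rate:.1%} -> {status}")
```

If the rule works anything like this, low-volume providers are the most exposed: in the hypothetical small practice above, a single non-affirmed request out of nine drops the rate below 90 percent.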

Privacy and Oversight Questions CMS Didn't Answer

The Federal Register notice states (p. 28753) that model participants will function as "business associates" under HIPAA, with "a business associate relationship" established "between CMS's Medicare FFS health plan and the model participants which will be documented through valid business associate agreements (BAA) that comply with the requirements of the HIPAA Privacy Rule."

The notice provides no specifics on:

- Which companies will serve as model participants

- What AI systems they will use and how

- How algorithms will be audited for bias

- What happens when AI recommendations are wrong

- How demographic patterns in denials will be monitored

- Whether claims data will be used to train commercial AI models

The document states "CMS is also exploring implementation of 'gold carding'" and notes "more details regarding the exemption process will be shared in the future." These and other critical implementation details remain undefined as of February 2026.

What This Means for Medicare Beneficiaries and Providers

"Starting on January 5, 2026, for dates of service on or after January 15, 2026, select items and services covered under Original Medicare will be subject to prior authorization or pre-payment medical review" (CMS WISeR Provider and Supplier Operational Guide, 2025). Prior authorizations remain valid for 120 days from approval.

Providers who don't submit prior authorization will have claims flagged for pre-payment review where model participants request documentation. Providers have 45 days to respond. Claims denied for payment can be appealed through existing Medicare administrative appeal processes.

Medicare beneficiaries retain freedom to seek care from their provider of choice. Coverage policies don't change under WISeR. But access may be affected by delays, denials, and provider decisions about whether to offer services subject to prior authorization requirements.

The model affects approximately 6.4 million Americans on traditional Medicare in the six pilot states.

The NEJM AI research reveals why WISeR's structure is particularly dangerous. The study found that responses deemed low accuracy by physicians may still be highly persuasive to laypeople, highlighting the danger in generating and releasing AI-generated medical responses to the public without doctor supervision. Yet WISeR places AI systems operated by profit-motivated third parties between patients and coverage decisions, with vague oversight requirements.

The researchers warned that their findings "expose a number of key considerations that need to be consistently evaluated, from the perspective of both the layperson and the physician, when designing and deploying technologies such as LLMs and chatbots in medical response applications." WISeR deploys exactly such systems, but the Federal Register notice addresses none of these considerations.

The Unanswered Questions

CMS didn't address several critical issues:

- What are the demographic breakdowns of approvals versus denials?

- How will algorithmic bias be detected and corrected?

- Who is liable when AI systems make incorrect determinations?

- What patient outcome metrics beyond cost reduction will be tracked?

- How will the model prevent the documented harms associated with prior authorization delays?

- Given research showing patients cannot detect inaccurate AI medical advice, what safeguards prevent harm when profit-motivated algorithms make coverage decisions?

The Federal Register notice cites the 2021 evaluation of the Medicare Prior Authorization Model for Repetitive Scheduled Non-Emergent Ambulance Transport (RSNAT), stating that "evidence demonstrates that under the Medicare Prior Authorization Model for RSNAT, there was no adverse effect on quality of care or access to care."

But RSNAT involved ambulance transportation, not complex surgical procedures, chronic disease management, or cancer treatment. The services in WISeR carry substantially different clinical implications. And the MIT and Stanford research demonstrates that AI-generated medical recommendations pose documented risks that ambulance scheduling decisions do not: patients will trust and follow inaccurate AI advice at rates comparable to following physician recommendations.

What You Should Do

- If you're a Medicare beneficiary in New Jersey, Ohio, Oklahoma, Texas, Arizona, or Washington, understand which services require prior authorization.

- Ask your providers about their experience with the WISeR system now that it is live.

- Document any delays or denials.

- Recognize that research shows you may not be able to distinguish accurate from inaccurate AI-generated recommendations, so insist on physician oversight of coverage decisions.

- If you're a provider, track your approval rates, denial patterns, and time spent on prior authorization.

- Document cases where prior authorization delays harm patients.

- Consider whether the administrative burden outweighs the benefit of offering services subject to WISeR requirements.

- Understand that your patients cannot reliably detect when AI systems provide inaccurate guidance, making your role as their advocate more critical.

- If you're a patient advocate or healthcare organization, demand transparency on model participant identities, AI systems used, demographic patterns in denials, and patient outcomes beyond cost savings.

- Request independent audits of algorithmic bias.

- Push for accountability mechanisms before the system becomes permanent.

- Cite the NEJM AI research showing patients overtrust inaccurate AI medical advice when challenging WISeR's lack of safeguards.

- If you're a policymaker, ask the questions CMS didn't answer.

- Require public reporting on denial rates by service type, provider characteristics, and patient demographics.

- Mandate independent oversight of AI systems.

- Establish clear liability when algorithms make incorrect determinations that harm patients.

- Address how WISeR will prevent the documented harm that occurs when patients trust and follow inaccurate AI-generated medical recommendations.
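For providers and advocates acting on the tracking and reporting items above, a minimal sketch of a denial-rate tally might look like the following. The record layout (`service`, `patient_group`, `denied`) and the sample rows are hypothetical; a real log would come from a practice's own prior authorization records, and any demographic fields would need to be collected and stored in line with privacy rules.

```python
from collections import defaultdict


def denial_rates(decisions, key):
    """Return {group: denial rate} for the given grouping field.

    decisions: iterable of dicts, each with the grouping field named by
    `key` and a boolean 'denied' flag. The schema is an assumption.
    """
    totals = defaultdict(int)
    denied = defaultdict(int)
    for decision in decisions:
        group = decision[key]
        totals[group] += 1
        if decision["denied"]:
            denied[group] += 1
    return {group: denied[group] / totals[group] for group in totals}


# Hypothetical log of prior authorization outcomes.
log = [
    {"service": "epidural steroid injection", "patient_group": "A", "denied": True},
    {"service": "epidural steroid injection", "patient_group": "B", "denied": False},
    {"service": "cervical fusion", "patient_group": "A", "denied": False},
    {"service": "cervical fusion", "patient_group": "B", "denied": False},
]

by_service = denial_rates(log, key="service")
by_group = denial_rates(log, key="patient_group")
for name, rate in sorted(by_service.items()):
    print(f"{name}: {rate:.0%} denied")
for name, rate in sorted(by_group.items()):
    print(f"group {name}: {rate:.0%} denied")
```

Grouping the same log by service type and by patient characteristics is the kind of breakdown the public reporting item calls for. With small samples the rates will be noisy, so counts should be reported alongside percentages.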

The WISeR model is now live. Most Medicare beneficiaries don't even know it exists. The Federal Register notice appeared on July 1, 2025. Public comment periods have closed. Implementation moved forward, and here we are.

The question isn't whether this model will reduce Medicare spending. When you pay companies based on denials, costs go down. The question is what happens to patients who need care that falls on the wrong side of the algorithm. Research shows patients may trust and follow inaccurate AI medical advice. WISeR gives profit-motivated companies control over those algorithms. And CMS provided no safeguards against the documented risks.

Because once algorithmic rationing becomes normal in traditional Medicare, there's no limiting principle that prevents expansion to more services, more states, and eventually the entire program.

The precedent matters more than the pilot.
